Brain visualization prototype holds promise for precision medicine

The ability to combine all of a patient's neurological test results into one detailed, interactive "brain map" could help doctors diagnose and tailor treatment for a range of neurological disorders, from autism to epilepsy. But before this can happen, researchers need a suite of automated tools and techniques to manage and make sense of these massive, complex datasets.

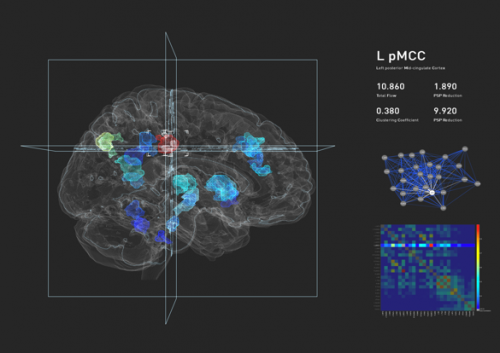

To get an idea of what these tools would look like, computational researchers from the Lawrence Berkeley National Laboratory (Berkeley Lab) are working with neuroscientists from the University of California, San Francisco (UCSF). So far, the Berkeley Lab team has used existing computational tools to translate UCSF laboratory data into 3D visualizations of brain structures and activity. Earlier this year, Los Angeles-based Oblong Industries joined the collaboration and implemented a state-of-the-art, gesture-based navigation interface that allows researchers to interactively explore 3D brain visualizations with hand poses and movements.

Researchers from Berkeley Lab, UCSF and Oblong Industries presented a prototype of their brain simulation and innovative navigation interface at UCSF's OME Precision Medicine Summit on Thursday, May 2.

"The collaboration with Oblong will make our visualizations much more powerful and relevant to precision medicine," says Daniela Ushizima, a Berkeley Lab computational researcher who is one of the collaboration's principal investigators. "This collaboration gives us the opportunity to have tools to browse big data sets at our fingertips, literally."

Designed to generate actionable projects and collaborations, the OME Precision Medicine Summit brought together leaders in health, bioscience, technology, government and other fields to lay out a roadmap and remove barriers for the evolving field known as precision medicine. The field of precision medicine will allow future doctors to cross-reference an individual's personal history and biology with patterns found worldwide and use that network of knowledge to pinpoint and deliver care that's preventive, targeted, timely and effective.

The future: Tackling neuroimaging's big data problem

According to Ushizima, the brain visualization prototype provides just a small glimpse of what the collaboration hopes to achieve. Ultimately, they would like to incorporate chemical elements captured by Positron Emission Tomography (PET) scans, electrical brain activity captured by Functional Magnetic Resonance Imaging (fMRI) and anatomical structure as captured by T1, T2 and other MRI scans.

As the collaboration continues, scientists in Berkeley Lab's Visualization and Analytics Group hope to develop tools and techniques for image processing and analysis. This team will also develop methods for visualizing and comparing different modalities of brain data: for instance, figuring out how to compare an anatomical brain region (like the frontal cortex) with the chemical activity that correlates with it.

Meanwhile, researchers in the Berkeley Lab's Future Technologies, Scientific Computing and Complex Systems groups will use graph analytics and image analysis algorithms to quantify and visualize this "multi-modal" data, giving researchers the flexibility to look at regions of interest by displaying electrical, anatomical and chemical activity. By representing brain data on dynamical graphs, neuroscientists will be able to see how different parts of the brain correlate with each other. They will also be able to identify and track temporal changes, or changes over time.
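To make the idea of a dynamical graph concrete, here is a minimal sketch in Python. It is not the collaboration's method. It assumes each brain region is summarized by a single activity time series, treats regions as graph nodes, and draws an edge wherever two regions are strongly correlated within a time window. The region names, signal values, window length, threshold and function names are all invented for illustration.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat signal has no defined correlation; treat as none
    return cov / sqrt(var_x * var_y)


def correlation_graph(signals, threshold=0.8):
    """Return edges (region_a, region_b, r) for pairs with |r| >= threshold."""
    edges = []
    for a, b in combinations(sorted(signals), 2):
        r = pearson(signals[a], signals[b])
        if abs(r) >= threshold:
            edges.append((a, b, r))
    return edges


def dynamic_graphs(signals, window, threshold=0.8):
    """Build one graph per non-overlapping time window to expose temporal changes."""
    length = min(len(s) for s in signals.values())
    graphs = []
    for start in range(0, length - window + 1, window):
        chunk = {name: s[start:start + window] for name, s in signals.items()}
        graphs.append(correlation_graph(chunk, threshold))
    return graphs


# Made-up activity values for three hypothetical regions (not real data).
signals = {
    "frontal": [1.0, 2.0, 3.0, 4.0, 4.0, 3.0, 2.0, 1.0],
    "parietal": [2.0, 4.0, 6.0, 8.0, 1.0, 2.0, 3.0, 4.0],
    "occipital": [5.0, 1.0, 4.0, 2.0, 8.0, 6.0, 4.0, 2.0],
}
graphs = dynamic_graphs(signals, window=4)
for index, edges in enumerate(graphs):
    print(f"window {index}: {[(a, b, round(r, 2)) for a, b, r in edges]}")
```

In this toy data the first window links only the frontal and parietal regions, while the second window links all three, which is the kind of change over time a dynamical graph is meant to surface. Real multi-modal data would need far more careful preprocessing and statistics than a fixed correlation threshold.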

"The technologies that exist for imaging the brain are very advanced and diverse. We have machines that provide extremely high-throughput, high-definition images of the brain in 3D, but unfortunately the tools to analyze this information have not advanced as quickly," says Ushizima.

She notes that even a relatively small amount of data collected from these imaging machines requires some level of manual curation, a process that can take anywhere from six months to a year. By automating and parallelizing this process, Ushizima believes this collaboration could change the paradigm.