How non-verbal cues can predict a person's (and a robot's) trustworthiness

People face this predicament all the time—can you determine a person's character in a single interaction? Can you judge whether someone you just met can be trusted when you have only a few minutes together? And if you can, how do you do it?

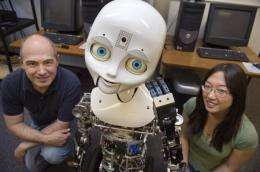

Using a robot named Nexi, Northeastern University psychology professor David DeSteno and collaborators Cynthia Breazeal from MIT's Media Lab and Robert Frank and David Pizarro from Cornell University have figured out the answer. The findings were recently published in the journal Psychological Science, a journal of the Association for Psychological Science.

It's What You're Not Saying…

In the absence of reliable information about a person's reputation, nonverbal cues can offer a look into a person's likely actions. This concept has been known for years, but the cues that convey trustworthiness or untrustworthiness have remained a mystery. Collecting data from face-to-face conversations with research participants where money was on the line, DeSteno and his team realized that it's not one single non-verbal movement or cue that determines a person's trustworthiness, but rather sets of cues. When participants expressed these cues, they cheated their partners more, and, at a gut level, their partners expected it. "Scientists haven't been able to unlock the cues to trust because they've been going about it the wrong way," DeSteno said. "There's no one golden-cue. Context and coordination of movements is what matters."

Robots Have Feelings, Too

People are fidgety: they're moving all the time. So how could the team truly zero in on the cues that mattered? This is where Nexi comes in. Nexi is a humanoid social robot that afforded the team an important benefit: they could control all its movements perfectly. In a second experiment, the team had research participants converse with Nexi for 10 minutes, much as they had with another person in the first experiment. While conversing with the participants, Nexi, operated remotely by researchers, either expressed cues that were considered less than trustworthy or expressed similar, but non-trust-related, cues. Confirming their theory, the team found that participants exposed to Nexi's untrustworthy cues intuited that Nexi was likely to cheat them and adjusted their financial decisions accordingly. "Certain nonverbal gestures trigger emotional reactions we're not consciously aware of, and these reactions are enormously important for understanding how interpersonal relationships develop," said Frank. "The fact that a robot can trigger the same reactions confirms the mechanistic nature of many of the forces that influence human interaction."

Real-Life Application

This discovery has led the research team not only to answer enduring questions about whether and how people are able to assess the trustworthiness of an unknown person, but also to show the human mind's willingness to ascribe trust-related intentions to technological entities based on the same movements. "This is a very exciting result that showcases how social robots can be used to gain important insights about human behavior," said Cynthia Breazeal of MIT's Media Lab. "This also has fascinating implications for the design of future robots that interact and work alongside people as partners." Accordingly, these findings hold important insights not only for security and financial endeavors but also for the evolving design of robots and computer-based agents. The subconscious mind is ready to see these entities as social beings.