Learning how to listen with neurofeedback

When you listen to music or learn a new language, auditory perceptual learning takes place: your recognition of specific sounds improves, and you become more efficient at processing and interpreting them. Neuroscientist Alex Brandmeyer shows that neurofeedback can facilitate auditory perceptual learning by helping listeners focus on the sound differences that really matter. On 19 March, he will receive his doctorate from Radboud University Nijmegen.

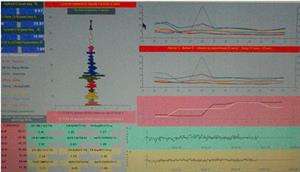

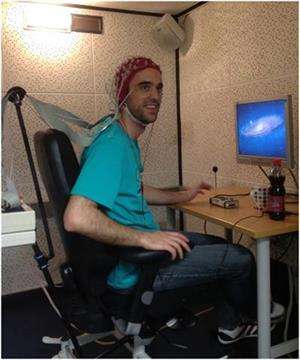

Neurofeedback presents your brain activity back to you as visual, auditory or haptic feedback while it is being recorded, which allows you to learn to regulate it. Brandmeyer used electroencephalography (EEG) to record the brain activity of research participants while they listened to sounds. The measurements were displayed as changes in the clarity of films that the participants were watching: the film became clearer as specific patterns of brain activity underlying the auditory perception of the sounds became stronger. Participants were encouraged to adjust their listening strategies to improve the neurofeedback signal.

Importance of the mother tongue

During his PhD at the Donders Institute of Radboud University Nijmegen, Brandmeyer investigated how brain activity differs between native speakers of Dutch and native speakers of English while they listen to English sounds. Although the Dutch participants were fluent in English, their brains responded differently to English sounds than those of the native English speakers. According to Brandmeyer, this shows how subjective our perception of sound is: 'Some sound contrasts are important in one language but not in the other. These differences arise because our brains develop in a specific linguistic environment.' For instance, the vowel in the first syllable of 'cattle' and 'kettle' sounds the same to Dutch listeners, but not to native English listeners.

Learning how to listen

Brandmeyer also explored how we listen to music. In four sessions spread over one week, participants listened to simple sounds of different pitches and had to tell them apart. In addition, half of the participants received neurofeedback training based on their own brain activity, while the other half received fake neurofeedback. In the group that received genuine neurofeedback, the measured brain responses were enhanced during the training sessions relative to the control group. 'Longer periods of neurofeedback training could well lead to stronger perceptual learning effects', says Brandmeyer, 'but this requires more research in the future.'

In his thesis, Brandmeyer presents methods for applying neurofeedback in brain-computer interfaces (BCIs): software that you control with your brain activity. He carried out his research in the department of Cognitive Artificial Intelligence at the Donders Institute for Brain, Cognition and Behaviour of Radboud University Nijmegen. Brandmeyer is currently a postdoctoral researcher at the Max Planck Institute for Human Cognitive and Brain Sciences in Leipzig, where he studies the neural mechanisms underlying auditory scene analysis: the process by which a mixture of sounds is perceived as coming from distinct objects in the environment, for instance when several people in a room are talking at the same time.