Neurobiologist studies how the brain learns to interpret what the body touches

It's a touchy subject—literally. Samuel Andrew Hires, assistant professor of biological sciences, wants to know how the brain learns to understand what we're touching. Understanding how this works could one day be a boon for people who have suffered a stroke or spinal cord injury.

Hires recently received a five-year, $2.5 million New Innovator Award from the National Institutes of Health to advance the research. The award is the first of its kind obtained by a USC Dornsife researcher, and only the third overall for USC.

Glowing brain cells

Using technology he began developing while a postdoctoral associate at Howard Hughes Medical Institute's Janelia Research Campus in Ashburn, Va., Hires aims to map the neural circuits in the brain that interpret touch sensations and learn from them.

"All of our actions require a constant communication between our body and our brain to coordinate them, and the messages are encoded by electrical signals," he said. "Signals coming from your body are translated and interpreted in the brain."

Hires wants to understand how those signals are represented in the brain. Which neurons and networks of neurons become active while learning what something feels like? And how do they organize themselves as part of that process?

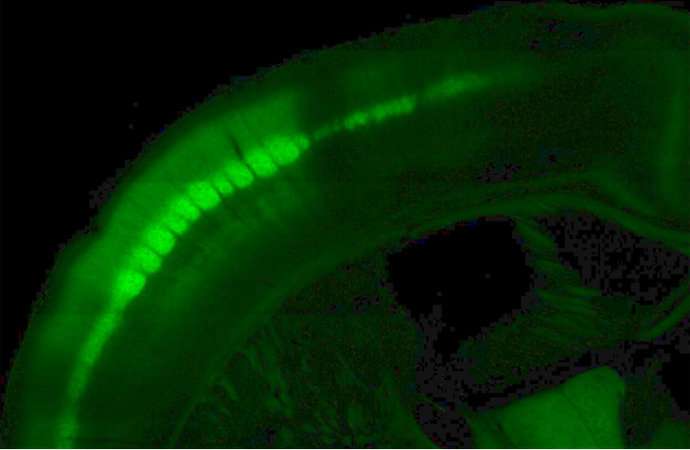

To answer this, he genetically engineers mice so that neurons in their brains glow green when they're active. He then challenges the mice to learn the difference between two shapes. The hitch, of course, is that the mice can't see the objects because they're in a dark box. They can only use their whiskers to make out the shapes.

"Mouse whiskers aren't like the ones dogs and cats have, or our whiskers," Hires said. In fact, mice use their whiskers much as we use our hands and fingers; they're the primary touch organ. "They brush their whiskers across objects actively like we sweep our fingers across a wall to find a light switch."

As the mice learn to judge the shapes, Hires analyzes which groups of neurons glow green in their brains. "It looks almost like a constellation or maybe a very dense Christmas tree of individual neurons blinking," he said. The technology enables him to look at the activity patterns of thousands of neurons at a time or zoom in to the level of individual neurons.

"That gives us a much clearer and faster picture … that allows us to start understanding what the brain is actually doing in there," he said.

Combining this brain imaging with high-speed video of the mouse whiskers will allow Hires to correlate the mice's efforts to probe the shapes with what is happening in their brains. This should give a much fuller picture of how signals from touch sensors interact with those that control the muscles, and how the brain translates and coordinates all of this information.

Though the research is in its earliest stages, it could one day lead to methods of restoring touch and motor control to stroke and spinal cord injury patients. Nearly 800,000 people in the United States have a stroke each year, and about 12,000 suffer a spinal cord injury.

"If we can understand the rules by which [brain] circuits organize, we may be able to coax them to do it using medicines or other methods," Hires said. Such treatments could stimulate the brain to form the activity patterns normally seen during touch and motor control, effectively restoring activity lost to stroke or injury.

Future bionics?

Looking further ahead, Hires' work might even let amputees feel again through prosthetic devices.

Just as he engineered mouse brain cells to glow when active, it's possible to engineer neurons to carry a protein that responds to light. The protein, called channelrhodopsin, is related to the light-sensitive receptors in the eye. Shining light on a neuron that carries it activates the neuron.

If neurons carry this light-sensitive protein, and once Hires has mapped the neurons and neural circuits that allow the brain to interpret and respond appropriately to touch, it should in theory be possible to fool the brain into "feeling." In fact, Hires has already done this in mice, making them think they've touched something that isn't there or that an object is in a different position than it really is.

Doing this in humans is a very long way off, but it's not out of the question. In the distant future, touch sensors in a prosthetic limb could send signals to light-emitting hardware implanted in the brain, which would then target the appropriate neural circuits and activate them.

"This is, of course, science fiction at the moment," Hires said. "But it's good to dream. That's how ideas are born."