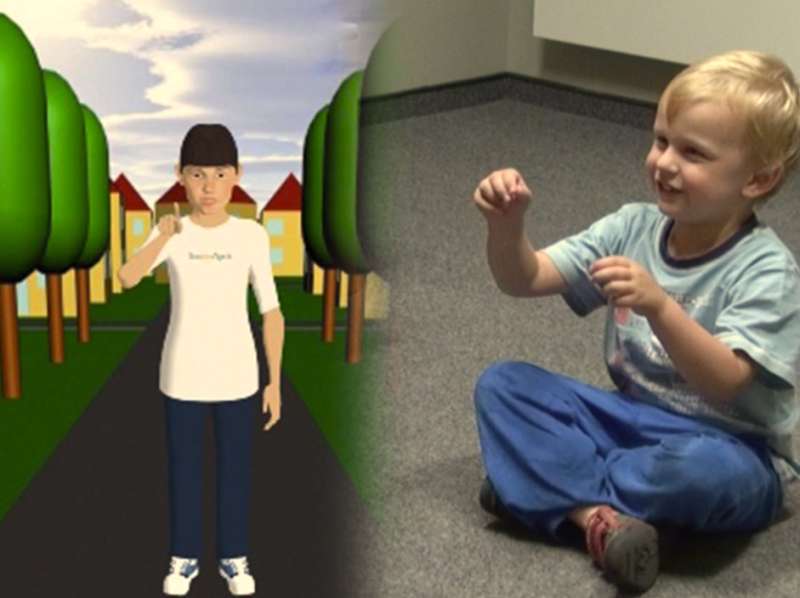

A model demonstrates the gestures that children use to complement their verbal statements. Credit: CITEC/Universität Bielefeld

What role does gesturing play as children learn to speak? What gestures do they use to complement their verbal statements? Can computer-assisted models of language acquisition explain different types of gestures? In the new project EcoGest, researchers are investigating how children's use of gestures relates to the communicative situations they face. The project brings together researchers from the Cluster of Excellence Cognitive Interaction Technology (CITEC) at Bielefeld University and their collaborators.

"With a computer-assisted model, we want to simulate how children of a certain age use gestures when speaking," says Professor Dr. Stefan Kopp from CITEC. To this end, his colleagues from linguistics will investigate how children's use of gestures is shaped by different interactional and cognitive demands.

"When children speak, they use various types of gestures. In this project, iconic gestures take center stage. These gestures pertain to the forms, functions, and movements of objects," says Professor Dr. Katharina Rohlfing, a psycholinguist at Paderborn University and an associate member of CITEC.

Gestures go hand in hand with speech, yet the gestures children produce differ depending on the particular linguistic task. "Each linguistic task makes different demands on children, which they manage partly through verbal and partly through gestural means," says Professor Dr. Friederike Kern, from the Faculty of Linguistics and Literary Studies at Bielefeld University.

Over a period of three years, the researchers will record children aged four to five in various communicative situations. The children will be asked, for instance, to explain an activity or tell a story. The researchers will then analyze which gestures the children use, and in which contexts and grammatical constructions they use them.

This large empirical dataset will provide the foundation for quantitative and qualitative analyses of children's gesturing, as well as for computer-based modeling. "Taking the form of a virtual child, the computer model is meant to reproduce what we observe in the children, but also to produce new things," says Kopp. "How does gesturing develop, for example, if the virtual child is five years old and speaks comparatively little? Or, what happens when the model is confronted with a situation that we did not observe in the data? Based on this, we can draw conclusions about the development of speech and gesturing in children." For this model, Kopp and his colleagues are developing a virtual working memory for gesturing and a conceptual working memory for speech, with which they aim to model the cognitive processes involved in language acquisition.

Provided by Bielefeld University