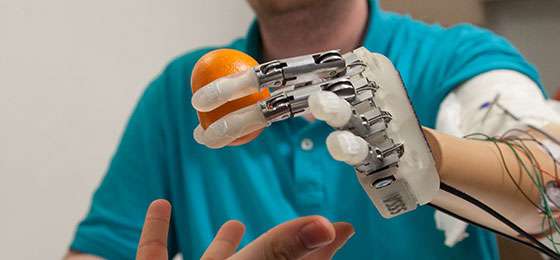

Credit: Swiss National Science Foundation

Most amputees use purely aesthetic prostheses. They find it difficult to accept a robotic limb that is not only complicated to use but also moves somewhat unnaturally. Most of the models on the market today can execute only a few simple gestures, such as opening and closing the fist, and often in a very jerky way. Furthermore, users can't always properly control the magnitude of the movement, which adds a safety risk.

Scientists are therefore striving to bring prosthetic movements closer to those of the human body by using machine learning, a branch of artificial intelligence. With these algorithms, prostheses learn to carry out the right movements by observing natural ones.

Studying gestures

At the Institute of Information Systems at HES-SO Valais-Wallis in Sierre, Henning Müller is compiling the largest ever database of hand movements and making it available to the scientific community. It currently contains some 50 gestures measured from 78 subjects, both amputees and non-amputees. "We have joined forces with physiotherapists who work with amputees on a daily basis", explains Müller. "This data will allow us to create algorithms that will increase prosthetic dexterity. This in turn will make the movements more acceptable to patients".

Another aim of the project is to better understand the neuropsychological mechanisms at play. "We don't yet know the effect of an amputation on the brain", says Müller. "But this is key to creating intelligent prosthetics that patients will want to accept as part of their bodies". He is also trying to understand why some people can use their prosthetic limb better than others. What he has found so far is that the precision of movements increases with the time elapsed since the amputation and with the intensity of phantom limb pain (which is linked to the loss of a limb). The explanation is probably related to greater nervous-system connectivity.

Error-driven learning

Machine learning is also at the heart of José Millán's work. In 2010, this EPFL researcher developed a wheelchair that could be driven by thought. It used an electrode-filled headset that measured the brain's neural impulses. He has since developed further brain-machine interfaces that learn for themselves how to manipulate a robotic arm correctly. "The brain also emits a specific electrical impulse when we fail to make a movement", he explains. His device decodes this error signal and transmits it to the artificial arm, which distinguishes between correct and incorrect movements, and in that way builds up a database of actions. "This approach allows us to obtain results more quickly. Without it, the patient has to learn the equivalent of a new motor skill, which takes much more time, as our experience as children shows".
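The error-driven idea described above can be illustrated with a toy sketch. It is not Millán's system: the class `ErrorDrivenArm`, the function `simulated_error_signal`, and the gesture and goal names are all hypothetical, and the decoded brain signal is replaced by a simple lookup of the intended gesture. The sketch only shows how a yes/no error signal can be used to fill a database of correct and incorrect actions.

```python
from collections import defaultdict


class ErrorDrivenArm:
    """Toy learner (hypothetical): records which actions triggered an error."""

    def __init__(self, actions):
        self.actions = list(actions)
        # Database of actions: goal -> {action: True if an error followed}.
        self.database = defaultdict(dict)

    def choose(self, goal):
        known = self.database[goal]
        # Reuse an action already confirmed as correct, if there is one.
        for action, was_error in known.items():
            if not was_error:
                return action
        # Otherwise try the first action that has not been tried yet.
        for action in self.actions:
            if action not in known:
                return action
        return None  # every candidate was flagged as an error

    def feedback(self, goal, action, error_detected):
        self.database[goal][action] = error_detected


def simulated_error_signal(goal, action, intended):
    """Stand-in for the decoded brain signal: True when the action was wrong."""
    return intended[goal] != action


def train(arm, intended, max_trials=10):
    """Return the number of trials needed to find a correct action per goal."""
    trials = {}
    for goal in intended:
        for trial in range(1, max_trials + 1):
            action = arm.choose(goal)
            if action is None:
                break
            error = simulated_error_signal(goal, action, intended)
            arm.feedback(goal, action, error)
            if not error:
                trials[goal] = trial
                break
    return trials


# Assumed example data, chosen only for illustration.
ACTIONS = ["open_hand", "close_fist", "pinch"]
INTENDED = {"grasp_cup": "close_fist", "pick_coin": "pinch"}

arm = ErrorDrivenArm(ACTIONS)
trials_needed = train(arm, INTENDED)
print(trials_needed)
```

In this sketch the user never has to describe the right movement explicitly; the arm narrows down its options from the error signal alone, which is the point made in the quote above.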

Other researchers are using implants that place the machine directly between the inner workings of the brain and the peripheral nerves of the arm. For example, Silvestro Micera (EPFL Center for Neuroprosthetics) first succeeded in restoring the sense of touch to an amputee in 2014. The artificial hand transforms sensory information into an electric current, which is converted into nerve impulses by electrodes grafted into the patient's arm. Micera is convinced that in future all prosthetics will be connected to an implant. "For a patient to integrate their prosthesis, it's important for them to have natural senses, and we get the best results with implants". One fundamental question remains, however: will amputees accept an artificial limb integrated so intimately into the body?

More information: Manfredo Atzori et al. Effect of clinical parameters on the control of myoelectric robotic prosthetic hands, Journal of Rehabilitation Research and Development (2016). DOI: 10.1682/JRRD.2014.09.0218

Provided by Swiss National Science Foundation