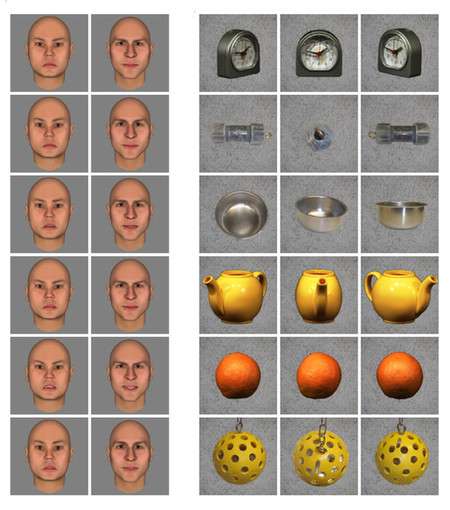

Images shown to test face patch activity. Face patches are regions in the brain that respond strongly to faces. Additionally, they have a smaller response to objects like clocks and apples. These responses are used to shape our perceptions. Credit: D. Tsao

The human brain is constantly abuzz with electrical activity as brain cells, called neurons, respond to sensory input and give rise to the world we perceive. Six particular regions of the brain, called face patches, contain neurons that respond more to faces than to any other type of object. New research from Caltech shows how perturbations in these face cells alter perception, answering a longstanding question in cognitive science.

The research was led by Doris Tsao (BS '96), professor of biology, Tianqiao and Chrissy Chen Center for Systems Neuroscience Leadership Chair, and Howard Hughes Medical Institute Investigator. A paper describing the work appeared online on March 13 in Nature Neuroscience.

"Face cells will produce the maximum response when a subject is observing faces, but they will also produce a small amount of activity when a subject is looking at round objects like an apple or a clock," says Tsao. "There has been a long debate in cognitive science: Is the brain actually using these small responses to generate perception? Do face cells help us perceive clocks and apples?"

Tsao and her team set out to test this by altering the perception of a trained monkey. First, the monkey was trained to look left if two images shown in sequence were identical, and to look right if they were different. Then the researchers showed the monkey two identical images of faces, and stimulated specific face patches while it was viewing the second one. The monkey's response changed dramatically: the animal almost always indicated that the two identical faces were different, implying that the activity of face patch neurons plays a large role in generating our perception of faces. Surprisingly, the group found that stimulating face cells also had a significant effect on the perception of certain other objects.

Cartoon faces, left, and Mooney faces, right. These were shown to the subjects to measure face patch activity. Credit: D. Tsao

"There was a study a few years ago in humans, where a neurosurgeon stimulated the face area of a person, and the person exclaimed, 'Whoa! Your face just metamorphosed,'" says Tsao. "Our study systematically perturbed each patch in the face patch network with a much larger set of stimuli, and we found that we could also perturb the perception of cartoon faces, two-toned abstract images called Mooney faces, and even apples. So we've shown that the class of objects that can be affected by face patch stimulation is quite large, much larger than only realistic photographs of faces. Even more surprisingly, we found that some of the objects whose percept we could alter with stimulation did not even activate the face patch we were stimulating—the face patch neurons were completely silent to these objects."

What could explain this? The group discovered that the most posterior face patch in the temporal lobe, thought to harbor the earliest stage of face processing, was more permissive: it was activated by the objects whose percept could be altered by face patch stimulation. Patches in more anterior parts of the temporal lobe were more specialized for faces. These findings show that while face patches play a large causal role in generating the perception of faces, these regions are also part of a complex network involved in processing a much larger class of objects. The results set the stage for future experiments to study how activity across the entire network is integrated to produce the perception of an object.

The work may have applications for engineers who design algorithms to teach machines to recognize objects.

"Machine vision engineers often ask: how many specialized networks do we need for robust object vision?" Tsao says. "Do we need a special network to recognize purses, shoes, cars, food? Or can we have one general purpose network? Our results suggest that the answer is a hybrid, and this may have important engineering implications."

More information: Sebastian Moeller et al. The effect of face patch microstimulation on perception of faces and objects, Nature Neuroscience (2017). DOI: 10.1038/nn.4527

Journal information: Nature Neuroscience

Provided by California Institute of Technology