Face identification accuracy is in the eye and brain of the beholder, researchers say

Though humans generally have a tendency to look at a region just below the eyes and above the nose toward the midline when first identifying another person, a small subset of people tend to look further down –– at the tip of the nose, for instance, or at the mouth. However, as UC Santa Barbara researchers Miguel Eckstein and Matthew Peterson recently discovered, "nose lookers" and "mouth lookers" can do just as well as everyone else when it comes to the split-second decision-making that goes into identifying someone. Their findings are in a recent issue of the journal Psychological Science.

"It was a surprise to us," said Eckstein, professor in the Department of Psychological & Brain Sciences, of the ability of that subset of "nose lookers" and "mouth lookers" to identify faces. In a previous study, he and postdoctoral researcher Peterson established, through tests involving a series of face images and eye-tracking software, that most humans tend to look just below the eyes when identifying another human being. When forced to look somewhere else, like the mouth, their face identification accuracy suffers.

The reason we look where we look, said the researchers, is evolutionary. With survival at stake and only a limited amount of time to assess who an individual might be, humans have developed the ability to make snap judgments by glancing at a place on the face that allows the observer's eye to gather a massive amount of information, from the finer features around the eyes to the larger features of the mouth. In 200 milliseconds, we can tell whether another human being is friend, foe, or potential mate. The process is deceptively easy and seemingly negligible in its quickness: Identifying another individual is an activity on which we embark virtually from birth, and is crucial to everything from day-to-day social interaction to life-or-death situations. Thus, our brain devotes specialized circuitry to face recognition.

"One of, if not the most, difficult task you can do with the human face is to actually identify it," said Peterson, explaining that each time we look at someone's face, it's a little different –– perhaps the angle, or the lighting, or the face itself has changed –– and our brains constantly work to associate the current image with previously remembered images of that face, or faces like it, in a continuous process of recognition. Computer vision has nowhere near that capacity in identifying faces, yet.

So it would seem to follow that those who look at other parts of a person's face might perform less well and might be slower to recognize potential threats or opportunities.

Or so the researchers thought. In a series of tests involving face identification tasks, the researchers found a small group that departed from the typical just-below-the-eyes gaze. The observers were Caucasian, had normal or corrected-to-normal vision, and had no history of neurological disorders –– all qualities that controlled for cultural, physical, or neurological elements that could influence a person's gaze.

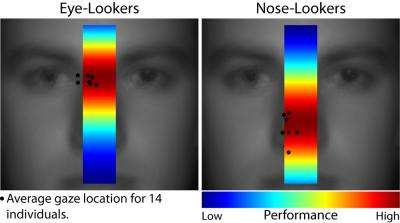

But instead of performing less well, as would have been predicted by the theoretical analysis of the investigators, the participants were still able to identify faces with the same degree of accuracy as just-below-the-eyes lookers. Furthermore, when these nose-looking participants were forced to look at the eyes to do the identification, their accuracy degraded.

The findings both fascinate and set up a chicken-and-egg scenario for the researchers. One possibility is that people tailor their eye movement to the properties of their visual system –– everything from their eye structures to the brain functions they are born with and develop. If, for example, one is able to see well in the upper visual field (the region above where they look), they can afford to look lower on the face without losing the detail around the eyes when identifying someone. According to Eckstein, it is known that most humans tend to see better in the lower visual field.

The other possibility is the reverse –– that our visual systems adapt to our looking behavior. If at an early age a person developed the habit of looking lower on the face to identify someone else, their visual system, including the brain circuits specialized for face identification, could over time develop and arrange itself around that tendency.

"The main finding is that people develop distinct optimal face-looking strategies that maximize face identification accuracy," said Peterson. "In our framework, an optimized strategy or behavior is one that results in maximized performance. Thus, when we say that the observer-looking behavior was self-optimal, it refers to each individual fixating on locations that maximize their identification accuracy."

Future research will delve deeper into the mechanisms involved in those who look lower on the face to determine what could drive that gaze pattern and what information is gathered.