AI model can accurately diagnose and triage health conditions, without introducing racial and ethnic biases

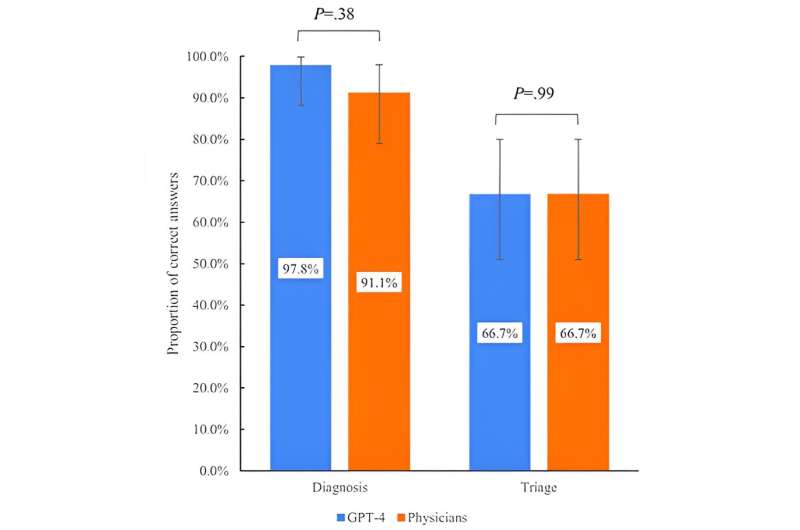

GPT-4, a conversational artificial intelligence (AI), can diagnose and triage health conditions at a level comparable to that of board-certified physicians, and its performance does not vary by patient race or ethnicity.

GPT-4 "learns" from information on the internet. Yet the accuracy of this form of AI for diagnosis and triage, and whether its recommendations carry racial and ethnic biases gleaned from that information, had not been investigated, even as the technology's use in health care settings has grown in recent years.

The researchers compared how GPT-4 and three board-certified physicians diagnosed and triaged health conditions in 45 typical clinical vignettes. For each vignette, they assessed the most likely diagnosis each provided and which triage level (emergency, non-emergency, or self-care) each judged most appropriate.

The study has some limitations. Although based on real-world cases, the clinical vignettes provided only summary information for diagnosis, which may not reflect clinical practice, in which patients typically provide more detailed information. In addition, GPT-4's responses may depend on how the queries are worded, and GPT-4 may have learned from the clinical vignettes used in this study. The findings also may not apply to other conversational AI systems.

Health systems can use the findings as they introduce conversational AI to improve the efficiency of patient diagnosis and triage.

"The findings from our study should be reassuring for patients because they indicate that large language models like GPT-4 show promise in providing accurate medical diagnoses without introducing racial and ethnic biases," said senior author Dr. Yusuke Tsugawa, associate professor of medicine in the division of general internal medicine and health services research at the David Geffen School of Medicine at UCLA.

"However, it is also important for us to continuously monitor the performance and potential biases of these models as they may change over time depending on the information fed to them."

The study is published in JMIR Medical Education.

More information: Naoki Ito et al, The Accuracy and Potential Racial and Ethnic Biases of GPT-4 in the Diagnosis and Triage of Health Conditions: Evaluation Study, JMIR Medical Education (2023). DOI: 10.2196/47532