Who's to blame when AI makes a medical error?

In gastrointestinal (GI) endoscopy, artificial intelligence (AI) is becoming an essential tool, especially for computer-aided detection of precancerous colon polyps during screening colonoscopy. This integration marks a significant advance in gastroenterology care. However, errors remain inevitable, and in some cases AI algorithms themselves could contribute to them.

To address this, physician-scientists at the Center for Advanced Endoscopy at Beth Israel Deaconess Medical Center (BIDMC), in collaboration with legal experts from Pennsylvania State University and Maastricht University, are pioneering efforts to develop guidelines on medical liability for AI use in GI endoscopy.

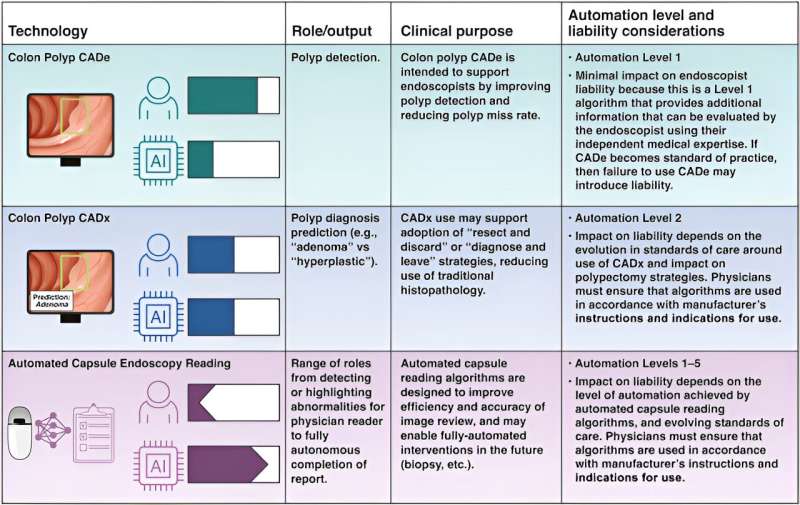

A recent paper, led by BIDMC gastroenterologists Sami Elamin, MD, and Tyler Berzin, MD, and published in Clinical Gastroenterology and Hepatology, represents the first international effort to explore the legal implications of AI in GI endoscopy from the perspective of both gastroenterologists and legal scholars. Berzin, an advanced endoscopist at BIDMC and Associate Professor of Medicine at Harvard Medical School, has led several of the early national and international studies exploring the role of AI for precancerous colon polyp detection, a "level 1" assistive algorithm.

However, AI tools are poised to advance beyond polyp detection and may soon play a role in predicting polyp diagnoses, potentially replacing the need for tissue biopsy in certain cases. The authors suggest that even higher levels of automation are both technically feasible and imminent, potentially assisting physicians with automated endoscopy reports and recommendations.

Lead author Elamin, a clinical fellow in Gastroenterology at BIDMC and Harvard Medical School, used hypothetical scenarios to explore the legal accountability of individual physicians or health care organizations for a variety of AI-generated errors that could occur in GI endoscopy.

The degree of legal responsibility for AI errors, the authors conclude, will depend on how these tools are integrated into clinical practice and the level of automation of the algorithms. To ensure the safety, proper implementation, and monitoring of these AI tools, collaboration among hospitals, medical groups, and gastroenterologists is crucial. Specialty societies and health care organizations must establish guidelines for physician oversight of AI tools at various automation levels.

For physicians, meticulous clinical documentation, whether they follow or deviate from AI recommendations, remains a cornerstone of minimizing liability risk.

More information: Sami Elamin et al, Artificial Intelligence and Medical Liability in Gastrointestinal Endoscopy, Clinical Gastroenterology and Hepatology (2024). DOI: 10.1016/j.cgh.2024.03.011