New algorithm greatly improves speed and accuracy of thought-controlled computer cursor

Stanford researchers have designed the fastest, most accurate algorithm yet for brain-implantable prosthetic systems that can help disabled people maneuver computer cursors with their thoughts. The algorithm's speed, accuracy and natural movement approach those of a real arm, and the system avoids the long-term performance degradations of earlier technologies.

When a paralyzed person imagines moving a limb, cells in the part of the brain that controls movement still activate as if trying to make the immobile limb work again. Despite neurological injury or disease that has severed the pathway between brain and muscle, the region where the signals originate remains intact and functional.

In recent years, neuroscientists and neuroengineers working in prosthetics have begun to develop brain-implantable sensors that can measure signals from individual neurons, and after passing those signals through a mathematical decode algorithm, can use them to control computer cursors with thoughts. The work is part of a field known as neural prosthetics.

A team of Stanford researchers has now developed an algorithm, known as ReFIT, that vastly improves the speed and accuracy of neural prosthetics that control computer cursors. The results are to be published November 18 in the journal Nature Neuroscience in a paper by Krishna Shenoy, a professor of electrical engineering, bioengineering and neurobiology at Stanford, and a team led by research associate Dr. Vikash Gilja and bioengineering doctoral candidate Paul Nuyujukian.

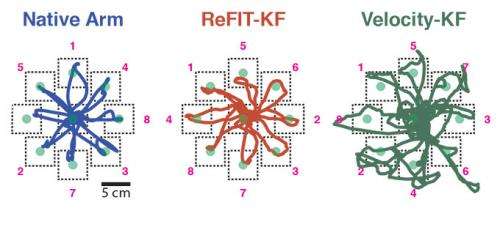

In side-by-side demonstrations with rhesus monkeys, cursors controlled by the ReFIT algorithm doubled the performance of existing systems and approached the performance of a real arm. Better yet, more than four years after implantation, the new system is still going strong, while previous systems have seen a steady decline in performance over time.

"These findings could lead to greatly improved prosthetic system performance and robustness in paralyzed people, which we are actively pursuing as part of the FDA Phase-I BrainGate2 clinical trial here at Stanford," said Shenoy.

Sensing mental movement in real time

The system relies on a silicon chip implanted into the brain, which records "action potentials" in neural activity from an array of electrode sensors and sends data to a computer. The frequency with which action potentials are generated provides the computer key information about the direction and speed of the user's intended movement.
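As a rough illustration of how firing rates can carry direction and speed, the sketch below uses a simple population-vector readout. This is an assumption chosen for clarity, not the decoder described in the paper: the four channels, their preferred directions, the baseline rates and the 50-millisecond bin are all hypothetical values.

```python
import math

def firing_rates(spike_counts, bin_seconds):
    """Convert per-channel spike counts from one time bin into rates in Hz."""
    return [count / bin_seconds for count in spike_counts]

def population_vector(rates, baselines, preferred_dirs):
    """Sum each channel's preferred direction, weighted by its rate above baseline."""
    vx = sum((r - b) * math.cos(t) for r, b, t in zip(rates, baselines, preferred_dirs))
    vy = sum((r - b) * math.sin(t) for r, b, t in zip(rates, baselines, preferred_dirs))
    return vx, vy

# Hypothetical channels preferring rightward, upward, leftward and downward movement.
preferred_dirs = [0.0, math.pi / 2, math.pi, 3 * math.pi / 2]
baselines = [20.0, 20.0, 20.0, 20.0]   # assumed resting rates in Hz
counts = [2, 1, 0, 1]                  # spikes seen in one 50 ms bin

rates = firing_rates(counts, 0.05)
vx, vy = population_vector(rates, baselines, preferred_dirs)
speed = math.hypot(vx, vy)                        # magnitude of intended movement
direction_deg = math.degrees(math.atan2(vy, vx))  # angle of intended movement
print(round(vx, 6), round(vy, 6), round(speed, 6))
```

In this toy case the rightward channel fires well above baseline and the leftward channel falls below it, so the readout points to the right. The output is in arbitrary units; a real system would fit a scale factor during calibration.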

The ReFIT algorithm that decodes these signals represents a departure from earlier models. In most neural prosthetics research, scientists have recorded brain activity while the subject moves or imagines moving an arm, analyzing the data after the fact. "Quite a bit of the work in neural prosthetics has focused on this sort of offline reconstruction," said Gilja, the first author of the paper.

The Stanford team wanted to understand how the system worked "online," under closed-loop control conditions in which the computer analyzes and implements visual feedback gathered in real time as the monkey neurally controls the cursor toward an onscreen target.

The system is able to make adjustments on the fly while guiding the cursor to a target, just as a hand and eye work in tandem to move a mouse cursor onto an icon on a computer desktop. If the cursor strays too far to the left, for instance, the user likely adjusts their imagined movements to redirect it to the right. The team designed the system to learn from the user's corrective movements, allowing the cursor to move more precisely than it could in earlier prosthetics.

To test the new system, the team gave monkeys the task of mentally directing a cursor to a target—an onscreen dot—and holding the cursor there for half a second. ReFIT performed vastly better than previous technology in terms of both speed and accuracy. The path of the cursor from the starting point to the target was straighter and it reached the target twice as quickly as earlier systems, achieving 75 to 85 percent of the speed of real arms.

"This paper reports very exciting innovations in closed-loop decoding for brain-machine interfaces. These innovations should lead to a significant boost in the control of neuroprosthetic devices and increase the clinical viability of this technology," said Jose Carmena, associate professor of electrical engineering and neuroscience at the University of California, Berkeley.

A smarter algorithm

Critical to ReFIT's time-to-target improvement was its superior ability to stop the cursor. While the old model's cursor reached the target almost as fast as ReFIT, it often overshot the destination, requiring additional time and multiple passes to hold the target.
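To see why overshooting costs time even when the cursor arrives quickly, consider a minimal sketch of the acquire-and-hold rule from the task. The half-second hold comes from the task description; the function name, target radius, sample interval and both cursor paths are hypothetical, chosen only to illustrate the effect.

```python
import math

def acquisition_time(path, target, radius, hold_seconds, dt):
    """Return the time at which the cursor has stayed inside the target
    for hold_seconds without leaving, or None if that never happens."""
    needed = round(hold_seconds / dt)  # consecutive in-target samples required
    inside = 0
    for i, (x, y) in enumerate(path):
        if math.hypot(x - target[0], y - target[1]) <= radius:
            inside += 1
            if inside >= needed:
                return (i + 1) * dt
        else:
            inside = 0  # leaving the target restarts the hold timer
    return None

target, radius, dt = (10.0, 0.0), 1.0, 0.1

# Slower approach that stops cleanly on the target.
clean_x = [2, 4, 6, 8, 9.5, 10, 10, 10, 10]
# Faster approach that overshoots and has to come back.
overshoot_x = [3, 6, 9.5, 12, 13, 11.5, 10.5, 10, 10, 10, 10]

clean = acquisition_time([(x, 0.0) for x in clean_x], target, radius, 0.5, dt)
overshoot = acquisition_time([(x, 0.0) for x in overshoot_x], target, radius, 0.5, dt)
print(round(clean, 2), round(overshoot, 2))
```

The overshooting path touches the target two samples earlier than the clean path, yet it completes the hold later because the timer resets when the cursor leaves.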

The key to this efficiency was in the step-by-step calculation that transforms electrical signals from the brain into movements of the cursor onscreen. The team had a unique way of "training" the algorithm about movement. When the monkey used his real arm to move the cursor, the computer used signals from the implant to match the arm movements with neural activity. Next, the monkey simply thought about moving the cursor, and the computer translated that neural activity into onscreen movement of the cursor. The team then used the monkey's brain activity to refine their algorithm, increasing its accuracy.
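The refinement step can be sketched as follows. The article does not spell out how brain-control data are used to refine the decoder, so the rule here is an assumption: the user is taken to have always intended to move straight toward the target, and to hold still once on it. The function names, target radius and sample data are hypothetical.

```python
import math

def intended_velocity(cursor, target, decoded_velocity, target_radius):
    """Estimate what the user meant: keep the decoded speed, aim it at the target."""
    dx, dy = target[0] - cursor[0], target[1] - cursor[1]
    dist = math.hypot(dx, dy)
    if dist <= target_radius:
        return (0.0, 0.0)  # assumed intent on target: hold still
    speed = math.hypot(decoded_velocity[0], decoded_velocity[1])
    return (speed * dx / dist, speed * dy / dist)

def build_refit_labels(samples, target, target_radius):
    """Turn (cursor position, decoded velocity) pairs from a brain-control
    session into corrected velocity labels for refitting the decoder."""
    return [intended_velocity(c, target, v, target_radius) for c, v in samples]

# Hypothetical brain-control samples: (cursor position, decoded velocity).
samples = [
    ((0.0, 0.0), (3.0, 4.0)),   # drifting upward while the target is to the right
    ((9.5, 0.0), (1.0, 0.0)),   # already on the target but still moving
]
labels = build_refit_labels(samples, target=(10.0, 0.0), target_radius=1.0)
print(labels)
```

A second decoder would then be fit by pairing the neural activity recorded at each sample with these corrected labels instead of the velocities the first decoder actually produced.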

The team introduced a second innovation in the way ReFIT encodes information about the position and velocity of the cursor. Gilja said that previous algorithms could interpret neural signals about either the cursor's position or its velocity, but not both at once. ReFIT can do both, resulting in faster, cleaner movements of the cursor.

An engineering eye

Early research in neural prosthetics had the goal of understanding the brain and its systems more thoroughly, Gilja said, but he and his team wanted to build on this approach by taking a more pragmatic engineering perspective. "The core engineering goal is to achieve highest possible performance and robustness for a potential clinical device," he said.

To create such a responsive system, the team decided to abandon one of the traditional methods in neural prosthetics. Much of the existing research in this field has focused on differentiating among individual neurons in the brain. Importantly, that fine-grained approach has allowed neuroscientists to build a detailed understanding of the individual neurons that control arm movement.

The individual neuron approach has its drawbacks, Gilja said. "From an engineering perspective, the process of isolating single neurons is difficult, due to minute physical movements between the electrode and nearby neurons, making it error-prone," he said. ReFIT focuses on small groups of neurons instead of single neurons.
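One common way to work with small groups of neurons rather than isolated cells is to count every voltage threshold crossing on an electrode, without trying to decide which neuron produced it. The article does not say exactly how ReFIT pools activity, so the sketch below is an assumption for illustration; the threshold and the voltage trace are hypothetical.

```python
def count_threshold_crossings(voltages, threshold):
    """Count downward crossings of a negative voltage threshold on one electrode.

    Every crossing is counted the same way, whichever nearby neuron caused it,
    so no spike sorting is needed.
    """
    crossings = 0
    for prev, curr in zip(voltages, voltages[1:]):
        if prev > threshold and curr <= threshold:
            crossings += 1
    return crossings

# Hypothetical voltage samples in microvolts from a single electrode.
trace = [0, -10, -60, -20, 5, -70, -80, -10, -55, 0]
count = count_threshold_crossings(trace, threshold=-50)
print(count)  # 3
```

Because the count does not depend on matching a waveform to a particular cell, small shifts between the electrode and nearby neurons matter less than they would for a sorted single-neuron signal.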

By abandoning the single-neuron approach, the team also reaped a surprising benefit: performance longevity. Neural implant systems that are fine-tuned to specific neurons degrade over time. It is a common belief in the field that after six months to a year, they can no longer accurately interpret the brain's intended movement. Gilja said the Stanford system is working very well more than four years later.

"Despite great progress in brain-computer interfaces to control the movement of devices such as prosthetic limbs, we've been left so far with halting, jerky, Etch-a-Sketch-like movements. Dr. Shenoy's study is a big step toward clinically useful brain-machine technology that have faster, smoother, more natural movements," said James Gnadt, PhD, a program director in Systems and Cognitive Neuroscience at the National Institute of Neurological Disorders and Stroke, part of the National Institutes of Health.

For the time being, the team has been focused on improving cursor movement rather than the creation of robotic limbs, but that is not out of the question, Gilja said. Near term, precise, accurate control of a cursor is a simplified task with enormous value for paralyzed people.

"We think we have a good chance of giving them something very useful," he said. The team is now translating these innovations to paralyzed people as part of a clinical trial.