August 28, 2013 report

Researchers find humans process echolocation and echo suppression differently

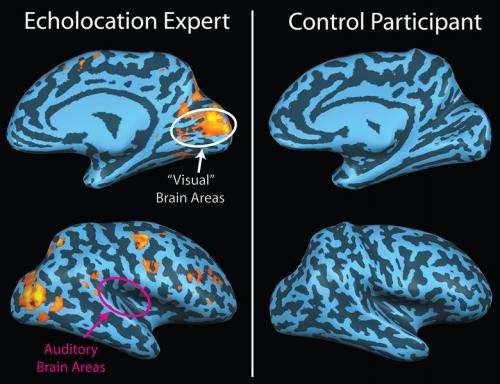

(Medical Xpress) - A trio of German researchers has found that human beings listening to sounds that have a corresponding echo process the sounds differently depending on whether they are using echolocation or echo suppression. In their paper published in Proceedings of the Royal Society B, the team describes the experiments they undertook with volunteers to understand how humans process echoes, and the results they obtained from analyzing their observations.

Echolocation is the process in which a person or animal emits a sound and then listens for the echo as the sound bounces back. By noting the time lag and the change in volume and tone of the returning sound, echolocators are able to "see" objects around them, and in some cases to distinguish between them. Scientists have known for years that humans, especially those who lose their sight, have some degree of echolocation ability. What has not been clear is how people process echoes when they can see what is going on around them, versus when they cannot. To find out more, the research team enlisted the assistance of several sighted volunteers.
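As a rough illustration of the time-lag cue described above, the distance to a reflecting object can be estimated from the round-trip delay of the echo. The sketch below uses the textbook relation (distance equals the speed of sound times the delay, divided by two) and assumes a speed of sound of about 343 m/s in air at room temperature. It is not a method taken from the study, and the function name `echo_distance_m` is hypothetical.

```python
SPEED_OF_SOUND_M_PER_S = 343.0  # assumption: dry air at about 20 °C


def echo_distance_m(delay_s: float,
                    speed_m_per_s: float = SPEED_OF_SOUND_M_PER_S) -> float:
    """Estimate the distance (in metres) to a reflector from the echo delay.

    delay_s is the time between emitting the sound and hearing its echo.
    """
    if delay_s < 0:
        raise ValueError("delay must be non-negative")
    # The sound travels to the object and back, so halve the total path.
    return speed_m_per_s * delay_s / 2.0


if __name__ == "__main__":
    # An echo arriving 10 ms after a click implies a reflector under 2 m away.
    print(echo_distance_m(0.010))
```

Under these assumptions, a 10 ms delay corresponds to a reflector roughly 1.7 m away; changes in volume and tone carry additional information that this simple relation does not capture.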

In the first experiment, the volunteers were fitted with headphones and asked to discriminate between a sound that appeared to come from a specific source and an echo that was made to sound as if it had come from a different direction. The team called this a "listening" experiment.

In the second experiment, the researchers fitted the volunteers with both headphones and a microphone. The volunteers were asked to make a clicking noise, which was made to sound as if it were bouncing off an object and producing an echo, and then to discriminate between the original sound and the echo. The team called this an "echolocation" experiment.

In analyzing how the volunteers responded in the two experiments, the researchers found that in the listening experiment, the volunteers tended to ignore the echo altogether, focusing instead on the original sound and the direction from which it came. In the echolocation experiment, the volunteers noted both the sound they made themselves and the echo that was produced. This suggests, they say, that humans automatically suppress a directional response to echoes when they are able to see the world around them. When people make the sounds themselves, however, their brains focus on both sounds and, in so doing, are able to recognize differences in tone, volume and direction, allowing them to build a mental map of objects that cannot be seen.

More information: Echolocation versus echo suppression in humans, published 28 August 2013, DOI: 10.1098/rspb.2013.1428

Abstract

Several studies have shown that blind humans can gather spatial information through echolocation. However, when localizing sound sources, the precedence effect suppresses spatial information of echoes, and thereby conflicts with effective echolocation. This study investigates the interaction of echolocation and echo suppression in terms of discrimination suppression in virtual acoustic space. In the 'Listening' experiment, sighted subjects discriminated between positions of a single sound source, the leading or the lagging of two sources, respectively. In the 'Echolocation' experiment, the sources were replaced by reflectors. Here, the same subjects evaluated echoes generated in real time from self-produced vocalizations and thereby discriminated between positions of a single reflector, the leading or the lagging of two reflectors, respectively. Two key results were observed. First, sighted subjects can learn to discriminate positions of reflective surfaces echo-acoustically with accuracy comparable to sound source discrimination. Second, in the Listening experiment, the presence of the leading source affected discrimination of lagging sources much more than vice versa. In the Echolocation experiment, however, the presence of both the lead and the lag strongly affected discrimination. These data show that the classically described asymmetry in the perception of leading and lagging sounds is strongly diminished in an echolocation task. Additional control experiments showed that the effect is owing to both the direct sound of the vocalization that precedes the echoes and owing to the fact that the subjects actively vocalize in the echolocation task.

© 2013 Medical Xpress