Everyone has different 'bad spots' in their vision

The ability to distinguish objects in peripheral vision varies significantly between individuals, finds new research from UCL, Paris Descartes University and Dartmouth College, USA. For example, some people are better at spotting things above their centre of vision while others are better at spotting things off to the right.

The research, published in Proceedings of the National Academy of Sciences and funded by the Medical Research Council (MRC), the European Research Council and Dartmouth College, shows that on average we are worse at spotting objects in crowded environments when they are above or below eye level, although the extent to which this happens varies between individuals.

"If you're driving a truck with a high cabin and looking straight ahead, you're less likely to notice pedestrians or cyclists at street level in your peripheral vision than if you were lower down with those same pedestrians on the left and right," explains lead author Dr John Greenwood (UCL Experimental Psychology). "A visually cluttered environment like a busy city road makes it even more difficult. As well as the physical blind spots on vehicles, people behind the wheel will also have different areas where their peripheral vision is better or worse."

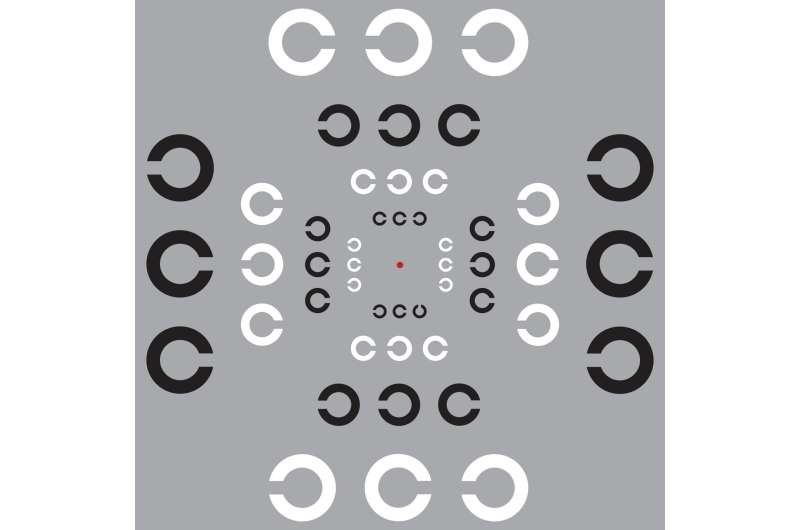

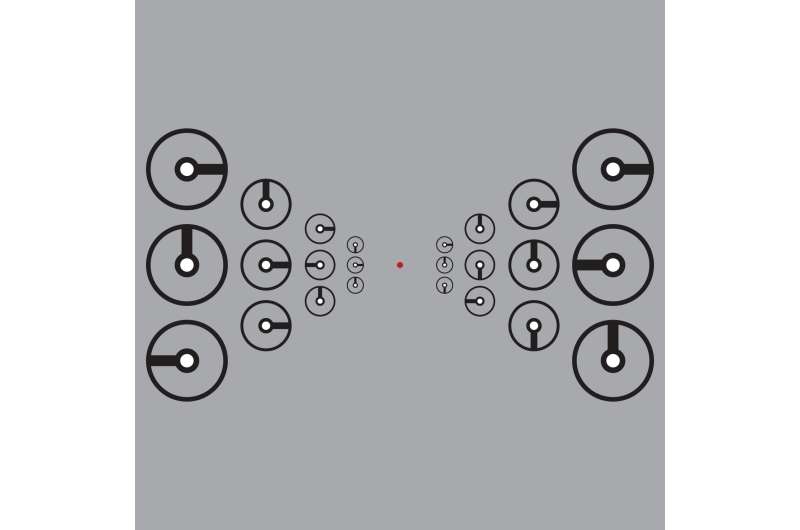

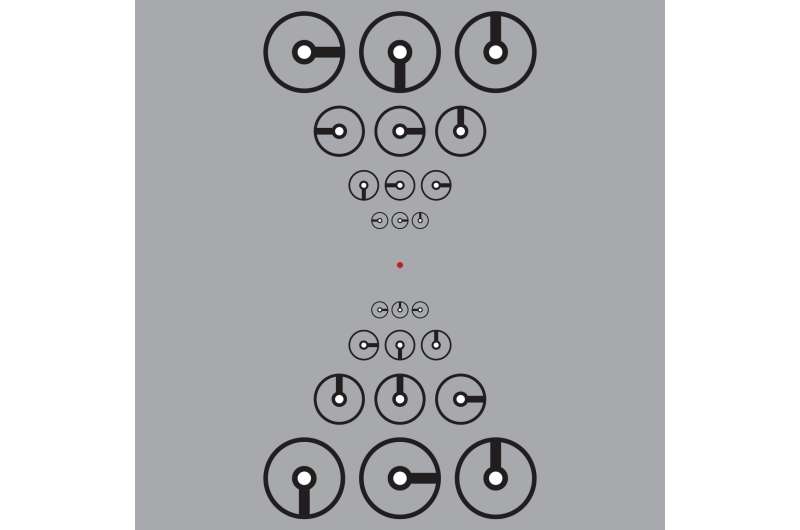

The study involved 12 volunteers who took part in a series of perception tests over several years. In the key experiment, participants kept their eyes on a point at the centre of a screen while images of clocks appeared in different parts of the visual field: either a single clock on its own, or a clock flanked by two others. Telling the time on the middle clock becomes harder the closer the flanking clocks are to it, because the scene is more visually 'cluttered'. This effect is known as 'visual crowding'.

Participants' ability to tell the time on the middle clock in a cluttered scene varied significantly, with different people performing better in different positions. On average, participants did worst in their upper peripheral vision, followed by their lower peripheral vision. There was no significant difference between left and right on average, with some volunteers better on the left and others on the right.

In the same task, participants were also asked to move their eyes to where the centre of the middle clock had been once it disappeared. The two measures were strongly correlated: the locations where clutter was most disruptive for an individual tended to be the same locations where that person's eye movements were least precise.

"Everyone has their own pattern of sensitivity, with islands of poor vision and other regions of good vision," explains Dr Greenwood. "If you're looking for your keys, then this profile will affect your ability to find them. For example, if your keys are on a table to the left of where you're focusing, the presence of books and papers on the table may stop you spotting the keys. Someone with strong left-sided vision could spot the keys even if they're right next to the book, whereas someone else might not notice the keys unless they're a foot away from the book. There is substantial variation between different people."

These 'islands' of poor vision were apparent across several tasks tested by the researchers, despite each relying on different processes in the brain. The implication is that these differences in peripheral vision could occur very early in the visual system, possibly beginning as early as the retina. It is unclear whether these differences are due to genetics or environment, but they are observed consistently over time.

"What is striking is the consistency of the pattern from the first levels of vision up to the highest levels, processing that involves very different areas of the brain," explains senior author Professor Patrick Cavanagh (Dartmouth College). "We propose that these variations originate at the first levels of vision very early in our development where simple features like edges and colours are registered, and then are inherited by higher levels as the rest of the brain wires itself up to deal with the information being sent from the eyes. The higher levels deal with recognizing objects, faces, and actions, and directing our eyes toward areas of interest."

Unlike the periphery, the centre of vision is largely free of crowding for most people, but in some conditions central vision is affected too. In amblyopia, also known as 'lazy eye', the brain does not properly interpret visual signals from one eye, which increases visual crowding. Some research has shown that people with dyslexia find it easier to read words when the letter spacing is increased to reduce visual crowding. Similarly, visual crowding effects may be one of the early symptoms of Posterior Cortical Atrophy, a form of dementia that predominantly affects vision. Crowding is also a factor in macular degeneration, one of the most common causes of blindness, in which the centre of the retina is affected first, so patients must rely on their peripheral vision to see.

"Our new paper helps us to better understand the mechanisms that cause visual crowding and where these occur in the visual system," says Professor Cavanagh. "In the long term, we hope that this will help with the development of better treatment strategies for a wide range of conditions that limit the usefulness of vision for millions of people worldwide."

More information: Topology of spatial vision: Shared variations in crowding, saccadic precision, and spatial localization, Proceedings of the National Academy of Sciences (2017). www.pnas.org/cgi/doi/10.1073/pnas.1615504114