Visual worlds in mirror and glass

The clear, colorful light characteristic of precious metals and jewels gives us a rich sense of their quality. This comes from our ability to perceive materials, which lets us estimate what an object is made of and the condition of its surface. Humans tend to attribute value to light that reflects from or passes through an object's surface in complex ways, and have sought out materials with desirable properties since the dawn of time. For this reason, researchers in fields including neuroscience, psychology and engineering have sought to uncover the brain processes that underlie material perception.

Reflective materials, such as mirrors and polished metals, have surfaces that reflect light specularly. Transparent materials, such as glass and ice, let light pass through and refract it. The images that appear on the surfaces of these materials vary greatly and in complex ways depending on their surroundings. Because such objects can produce countless different images, how humans distinguish between mirror and glass had been unknown.

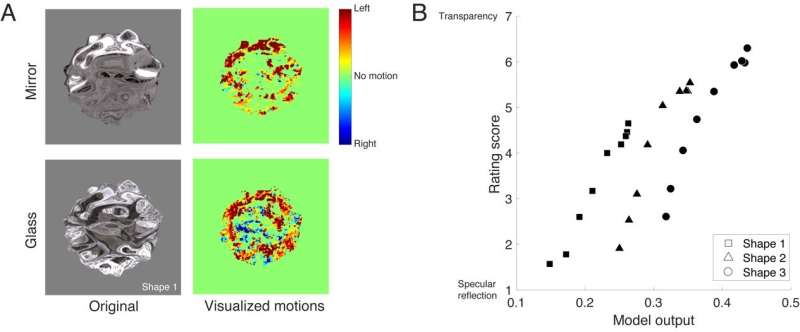

In everyday life, a viewed object and the viewer are rarely both stationary. The visual information that reaches the brain when an object is viewed was therefore thought to include latent dynamic (motion) information. For instance, when a mirror (reflective material) rotates, humans perceive motion only on the front side of the object. When glass (transparent material) rotates, however, humans can perceive motion at both the front and the rear of the object, where the motion runs in the opposite direction, because the object is transparent (FIG. 1A, moving picture 1).

The research team hypothesized that, out of the vast range of information available, humans use dynamic information as a cue for discriminating between reflective and transparent materials. The team empirically measured how well people perceive and discriminate between moving objects made of reflective and transparent materials, and used that data to develop and test a model for discriminating between the two (FIG. 1B). The model's output correlates closely with human perception, suggesting that material perception in humans can be accurately predicted.
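To make the idea of a simple dynamic cue concrete, here is a minimal toy sketch in Python. It is not the team's model, whose details are not described here. It assumes, purely for illustration, that we already have horizontal motion samples (in pixels per frame) measured across the image of a rotating object, and that a glass-like object shows a sizeable share of samples moving in the opposite direction while a mirror-like object does not. The function names, the `deadband` and `threshold` values, and the sample data are all hypothetical.

```python
from typing import Sequence


def opposing_motion_ratio(flows: Sequence[float], deadband: float = 0.1) -> float:
    """Fraction of moving samples that travel in the minority direction.

    `flows` holds horizontal velocities; samples whose magnitude is at or
    below `deadband` are treated as stationary and ignored (an assumption).
    """
    rightward = sum(1 for v in flows if v > deadband)
    leftward = sum(1 for v in flows if v < -deadband)
    moving = rightward + leftward
    if moving == 0:
        return 0.0  # nothing moves, so there is no opposing motion
    return min(rightward, leftward) / moving


def classify_material(flows: Sequence[float],
                      threshold: float = 0.2,
                      deadband: float = 0.1) -> str:
    """Label the object from one summary number (hypothetical threshold)."""
    ratio = opposing_motion_ratio(flows, deadband)
    return "glass-like" if ratio >= threshold else "mirror-like"


# Made-up samples: the mirror moves one way, the glass moves both ways.
mirror_flows = [1.2, 0.9, 1.1, 1.0, 0.05, 1.3]
glass_flows = [1.2, -0.8, 1.0, -1.1, 0.9, -0.7]

print(classify_material(mirror_flows))  # mirror-like
print(classify_material(glass_flows))   # glass-like
```

The point of the sketch is only that a single summary statistic can separate the two cases without analyzing the full image sequence; a real system would need measured motion data and a threshold fitted to human judgments.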

Lead author and Ph.D. student Hideki Tamura explains: "Because humans can distinguish between the various materials that are around them such as metal, glass and wood with very high accuracy, we initially thought that the brain carries out complex information processing to achieve this task. However, our brains may actually only perform simple information processing using cues that summarize the information we need. This discovery is expected to be applied to material property reproduction technology based on the mechanism of our brains."

Research team leader Professor Shigeki Nakauchi says, "We come across mirrors and glass all the time in our daily lives, but they are actually very peculiar materials in terms of material perception because they do not possess any color and merely distort whatever is around them. We are able to perceive and enjoy mirror-like and glass-like properties and the other various materials in our world by way of dynamic information, which initially seemed unrelated to this perception."

This research suggests that humans use efficient cues when discriminating between materials. More specifically, humans can estimate or express the material state of an object from summarized information, without needing to use all the information in, for example, a moving picture. The results are therefore expected to be applied in material property measurement systems and material reproduction technology that draw on the mechanisms of visual perception.

More information: Hideki Tamura et al, Dynamic Visual Cues for Differentiating Mirror and Glass, Scientific Reports (2018). DOI: 10.1038/s41598-018-26720-x