May 18, 2020 feature

Investigating neural mechanisms underlying individual differences in perception

Researchers at the National Brain Research Centre in Haryana, India, have recently carried out a study exploring the neural mechanisms that may underlie differences in how people perceive multisensory stimuli. Their paper, published in the European Journal of Neuroscience, introduces a biophysical model that could link individual variability in brain structure and function to performance on perception-related tasks.

"Our lab investigates neural mechanisms operational in large-scale functional brain networks during perception and action," Arpan Banerjee, one of the researchers who carried out the study, told MedicalXpress. "This is a revisionist view [that departs] from studying brain areas in silos, [each] operational during specific tasks involving cognition."

Past studies of human multisensory capabilities identified a number of brain areas associated with the processing of multisensory stimuli. Yet how these areas actually communicate with one another remains an open question, one that several research teams worldwide are currently exploring.

One specific aspect of this question that remains elusive is whether communication between these brain areas occurs through a particular brain rhythm, and whether that rhythm differs between frequent and rare perceivers of a well-known illusion called the McGurk effect. The McGurk effect occurs when a speech sound is paired with a visual stimulus, such as a speaker's lip movements, associated with a different sound, which can alter what people hear: for example, hearing "ba" while seeing lips articulate "ga" is often perceived as "da." The extent to which people are 'fooled' by this illusion varies, yet the neural mechanisms underlying this variability are not entirely clear.

"The goal of this study was to identify a metric (neuromarker) that captures the inter-individual variability on this task and provide an explanation of this marker using realistic computer simulations of brain dynamics," Banerjee said.

Signal-processing techniques based on mathematical functions such as the Fourier transform have been widely used in past electroencephalography (EEG) studies. These techniques have proved particularly useful for identifying rhythms that are specific to particular areas of the brain, or the frequencies at which two brain areas communicate with each other.
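As a rough illustration of how Fourier-based methods reveal a rhythm shared by two recording sites, here is a minimal sketch (assuming NumPy) of magnitude-squared coherence, averaged Welch-style over 50%-overlapping Hann-windowed segments. The function name `coherence`, the 250 Hz sampling rate, the 10 Hz "alpha" signal and the noise levels are all hypothetical choices for illustration; this is not the pipeline used in the study.

```python
import numpy as np


def coherence(x, y, fs, nperseg=256):
    """Magnitude-squared coherence |Sxy|^2 / (Sxx * Syy), Welch-style.

    Spectra are averaged over Hann-windowed segments with 50% overlap.
    Returns (frequencies in Hz, coherence values in [0, 1]).
    """
    step = nperseg // 2
    window = np.hanning(nperseg)
    sxx = syy = sxy = 0.0
    for start in range(0, len(x) - nperseg + 1, step):
        xs = np.fft.rfft(window * x[start:start + nperseg])
        ys = np.fft.rfft(window * y[start:start + nperseg])
        sxx = sxx + np.abs(xs) ** 2      # auto-spectrum of x
        syy = syy + np.abs(ys) ** 2      # auto-spectrum of y
        sxy = sxy + xs * np.conj(ys)     # cross-spectrum of x and y
    freqs = np.fft.rfftfreq(nperseg, d=1.0 / fs)
    return freqs, np.abs(sxy) ** 2 / (sxx * syy)


# Two hypothetical "channels" sharing a 10 Hz rhythm plus independent noise
rng = np.random.default_rng(0)
fs = 250.0                               # hypothetical sampling rate (Hz)
t = np.arange(0, 20, 1 / fs)
alpha = np.sin(2 * np.pi * 10 * t)
x = alpha + rng.standard_normal(t.size)
y = 0.8 * alpha + rng.standard_normal(t.size)
f, c = coherence(x, y, fs)
print(f"coherence near 10 Hz: {c[np.argmin(np.abs(f - 10))]:.2f}")
print(f"median coherence: {np.median(c):.2f}")
```

Coherence comes out close to 1 near the shared 10 Hz rhythm and close to 0 elsewhere, where the two channels contain only independent noise.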

"EEG techniques have a limitation, namely that they measure activity occurring in the cortex indirectly and outside the head, at a scalp level," Banerjee said. "Therefore, advanced algorithms are needed to uncover the source signals, which also requires aligning of data with structural brain maps captured using MRI techniques. We hypothesized that metrics like global coherence, pioneered by research groups led by Scott Kelso, Steve Bressler and Guido Nolte, can be useful to track the coordination dynamics among large brain networks."
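To give a feel for what a "global" coherence measure captures, here is a minimal sketch (assuming NumPy) of one common formulation: at each frequency, build the cross-spectral matrix across all channels and take the share of its total power explained by the largest eigenvalue. A value near 1 means most channels oscillate together at that frequency. The function name, channel count, gains and signals are hypothetical, and the exact variant used by the authors may differ.

```python
import numpy as np


def global_coherence(data, fs, nperseg=256):
    """Global coherence per frequency for data of shape (n_channels, n_samples).

    One common definition: the largest eigenvalue of the cross-spectral
    matrix divided by the sum of all its eigenvalues (its trace).
    """
    n_channels, n_samples = data.shape
    step = nperseg // 2
    window = np.hanning(nperseg)
    n_freqs = nperseg // 2 + 1
    csd = np.zeros((n_freqs, n_channels, n_channels), dtype=complex)
    for start in range(0, n_samples - nperseg + 1, step):
        spec = np.fft.rfft(window * data[:, start:start + nperseg], axis=1)
        # Accumulate S_ij(f) = X_i(f) * conj(X_j(f)) for every frequency f
        csd += np.einsum("if,jf->fij", spec, spec.conj())
    eigvals = np.linalg.eigvalsh(csd)    # real, ascending, one row per frequency
    freqs = np.fft.rfftfreq(nperseg, d=1.0 / fs)
    return freqs, eigvals[:, -1] / eigvals.sum(axis=1)


# Eight hypothetical channels sharing a 10 Hz rhythm with different gains
rng = np.random.default_rng(1)
fs = 250.0
t = np.arange(0, 20, 1 / fs)
gains = rng.uniform(0.5, 1.5, size=8)
alpha = np.sin(2 * np.pi * 10 * t)
data = gains[:, None] * alpha + rng.standard_normal((8, t.size))
freqs, gc = global_coherence(data, fs)
print(f"global coherence near 10 Hz: {gc[np.argmin(np.abs(freqs - 10))]:.2f}")
```

Because the eigenvalue ratio is bounded below by 1/n_channels, a low value (independent channels) sits near 1/8 here, while a single shared rhythm pushes it toward 1.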

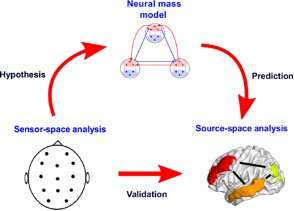

Banerjee and his colleagues used advanced algorithms, based on realistic simulations of large-scale brain networks, to predict source-level metrics for the communication that takes place between different brain areas. They then identified source-level activations of brain areas involved in perceiving the McGurk illusion in EEG data collected from people who were presented with it. To do this, they used a well-established beamforming method rooted in radio telescopy, called Dynamic Imaging of Coherent Sources (DICS).

This procedure allowed them to compute source-level network measures directly from the raw EEG data and then compare the neural mass model's predictions with empirical evidence of brain coordination dynamics. Ultimately, the researchers found that participants' susceptibility to the McGurk illusion was negatively correlated with their alpha-band global coherence: participants with higher alpha-band global coherence tended to perceive the illusion less often.
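The core of a DICS-style analysis is a frequency-domain beamformer: for each candidate source location, a spatial filter passes activity from that location with unit gain while suppressing everything else, and source-level coherence is then read off the filtered cross-spectrum. The sketch below (assuming NumPy) is a simplified, fixed-orientation, Tikhonov-regularized version of that idea, not the authors' implementation; published DICS variants differ in details such as dipole-orientation handling and whether only the real part of the cross-spectrum is used. The random leadfield and sensor data are hypothetical stand-ins for a forward model computed from MRI and real EEG spectra.

```python
import numpy as np


def dics_filters(leadfield, csd, reg=0.05):
    """Fixed-orientation frequency-domain beamformer filters.

    leadfield: (n_sensors, n_sources) forward model, one column per source
    csd: (n_sensors, n_sensors) sensor cross-spectral density at one frequency
    Returns filters of shape (n_sources, n_sensors), one row per source.
    """
    n = csd.shape[0]
    # Tikhonov regularization scaled to the average sensor power
    c_inv = np.linalg.inv(csd + reg * np.trace(csd).real / n * np.eye(n))
    filters = []
    for l in leadfield.T:
        # w = (l^H C^-1 l)^-1 l^H C^-1: unit gain at this source, minimum output power
        filters.append((l.conj() @ c_inv) / (l.conj() @ c_inv @ l))
    return np.array(filters)


def source_coherence(filters, csd, i, j):
    """Coherence between sources i and j from the projected cross-spectrum."""
    s = filters @ csd @ filters.conj().T     # source-level cross-spectral matrix
    return np.abs(s[i, j]) ** 2 / (s[i, i].real * s[j, j].real)


rng = np.random.default_rng(2)
n_sensors, n_sources, n_trials = 32, 3, 50
leadfield = rng.standard_normal((n_sensors, n_sources))   # hypothetical forward model
spectra = (rng.standard_normal((n_sensors, n_trials))
           + 1j * rng.standard_normal((n_sensors, n_trials)))
csd = spectra @ spectra.conj().T / n_trials   # hypothetical sensor CSD at one frequency
filters = dics_filters(leadfield, csd)
print(f"source 0-1 coherence: {source_coherence(filters, csd, 0, 1):.3f}")
```

The random inputs here only exercise the shapes and the unit-gain constraint; with real data, each source's spectrum would come from trial-wise Fourier coefficients at the frequency of interest (for example, the alpha band).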

"This approach of prediction via mass model and validation by source metrics is a novel tool used in this study and [has] never been applied before, at least to the best of our knowledge," Banerjee said. "Thus, in addition to identifying the global alpha coherence in rare-perceivers [on] the empirical side, our work introduces innovations in the methodological domain."

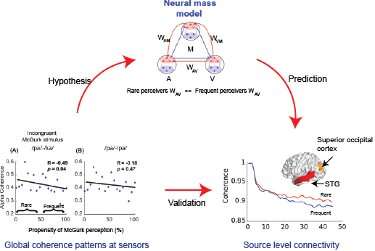

The new biophysical model introduced by Banerjee and his colleagues describes a set of neural masses, and the functional coupling patterns among them, that give rise to the large-scale brain network dynamics occurring while an individual simultaneously perceives different kinds of sensory stimuli (e.g., auditory and visual). These neural masses capture the average activity of the primary brain areas involved in processing auditory, visual and multisensory stimuli.

"Very simplistically speaking, the individual brain areas were distinguishable by their processing speeds, supported by collation of empirical evidence from animal and anatomical studies," Dipanjan Roy, one of the researchers who carried out the study, told MedicalXpress. "The specific functional couplings and their combinations simulated in this work, [which] are based on realistic audio-visual (AV), auditory-multisensory (AM) and visual-multisensory (VM) brain regions, can recreate the global change in coherence dynamics during cross-modal perception."

The model devised by the researchers makes a number of predictions. The most important is that rare perceivers of the McGurk illusion (i.e., those who are less susceptible to it) have stronger direct auditory-visual coupling than frequent perceivers. This prediction was subsequently confirmed by source-connectivity maps computed by Banerjee, Roy and their colleagues.
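The qualitative idea that a stronger direct auditory-visual link keeps those two areas more tightly synchronized can be illustrated with a toy network. The sketch below (assuming NumPy) swaps in Kuramoto-style phase oscillators for the authors' neural masses: three hypothetical nodes A (auditory), V (visual) and M (multisensory) with different natural frequencies, standing in loosely for different "processing speeds", coupled through a symmetric matrix. All frequencies and coupling strengths are made-up values, and the phase-locking value between A and V is used as a simple stand-in for coherence.

```python
import numpy as np


def simulate_phases(freqs_hz, coupling, t_max=10.0, dt=1e-3, seed=0):
    """Euler integration of d(theta_i)/dt = w_i + sum_j K_ij * sin(theta_j - theta_i)."""
    rng = np.random.default_rng(seed)
    omega = 2 * np.pi * np.asarray(freqs_hz, dtype=float)
    theta = rng.uniform(0, 2 * np.pi, size=omega.size)
    n_steps = int(t_max / dt)
    history = np.empty((n_steps, omega.size))
    for step in range(n_steps):
        # diff[i, j] = theta_j - theta_i, so row i sums the pull of each node j on node i
        diff = theta[None, :] - theta[:, None]
        theta = theta + dt * (omega + (coupling * np.sin(diff)).sum(axis=1))
        history[step] = theta
    return history


def phase_locking(history, i, j, discard=2000):
    """Phase-locking value |mean(exp(1j * (theta_i - theta_j)))|.

    The first `discard` steps (2 s at dt = 1 ms) are dropped as a transient.
    """
    dphi = history[discard:, i] - history[discard:, j]
    return np.abs(np.mean(np.exp(1j * dphi)))


def av_network(k_av, k_am=1.0, k_vm=1.0):
    """Symmetric coupling matrix (rad/s) for hypothetical nodes 0 = A, 1 = V, 2 = M."""
    return np.array([[0.0, k_av, k_am],
                     [k_av, 0.0, k_vm],
                     [k_am, k_vm, 0.0]])


FREQS = [11.0, 9.0, 10.0]   # hypothetical natural frequencies (Hz) for A, V, M
weak = phase_locking(simulate_phases(FREQS, av_network(k_av=0.0)), 0, 1)
strong = phase_locking(simulate_phases(FREQS, av_network(k_av=10.0)), 0, 1)
print(f"A-V phase locking, no direct coupling:     {weak:.2f}")
print(f"A-V phase locking, strong direct coupling: {strong:.2f}")
```

With these toy values, A and V drift apart when they are linked only through M, but lock together once the direct A-V coupling is strong enough to overcome their 2 Hz frequency difference. The actual model and its predictions are described in the paper.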

"Our study significantly contributes to the scientific literature in cross-modal perception, as it previously remained quite elusive in defining how the large-scale brain network dynamics between early sensory and higher order cognitive areas can give rise to perception and the variability thereof in various multisensory perceptual processing across participants," Roy said. "This finding is of course not limited to audio-visual processing, but very naturally paves the way for other multisensory domains, such as sight and sound with touch, smell, self-motion, and taste."

Past studies have found evidence that abnormal synchronization patterns in specific brain rhythms can partly contribute to multisensory deficits in elderly people and in people with a number of neurological and psychiatric conditions, including autism, schizophrenia and Alzheimer's disease. So far, however, researchers have been unable to clearly delineate the dynamical mechanisms that give rise to coherence and decoherence in the brain frequency bands associated with multisensory processing.

Banerjee and Roy, along with their graduate students G. Vinodh Kumar and Shrey Dutta, gathered valuable new insights that could shed light on these mechanisms, broadening the current understanding of the neural mechanisms underpinning individual differences in perception. First, the researchers identified enhanced global coherence in the alpha frequency band in those who did not perceive the McGurk illusion, compared with those who did.

"Using neural mass models, we also predicted that high direct audio-visual functional connections are key to maintaining the high alpha global coherence in rare-perceivers," Roy said. "Finally, using source-level network analysis, we validated our model's predictions that indeed the source-level coherences among auditory areas (left superior temporal gyrus, STG) and visual areas (medial occipital cortex, MOC, and superior occipital cortex, SOC) [are] higher in rare-perceivers of the McGurk illusion."

This team's study could ultimately improve our understanding of how the human brain integrates different types of sensory stimuli that it processes simultaneously. In the future, the methodology and model introduced by Banerjee, Roy and their colleagues could be used to investigate other forms of multisensory processing, or other situations in which several brain areas interact, for instance areas involved in emotion and judgement. In their next studies, the researchers would also like to explore how pre-stimulus brain states, which carry meaningful signatures of spontaneous brain rhythms, shape brain activity after a stimulus is presented.

"For the time being, we find the notion of decreased coherence (i.e., decoherence) an important marker for audio-visual integration," Roy and Banerjee said. "However, future brain imaging studies could investigate whether decoherence is a generalized metric that captures how effectively neural ensembles interact with environmental inputs. For example, in autistic populations and people with learning disabilities, the coherence existing in spontaneous dynamics may achieve high levels of stability, such that it is difficult to disturb [or] de-synchronize [it] to effectuate efficient information processing. Identifying such mechanisms could inform the development of new routes to recovery."

More information: G. Vinodh Kumar et al. Biophysical mechanisms governing large‐scale brain network dynamics underlying individual‐specific variability of perception, European Journal of Neuroscience (2020). DOI: 10.1111/ejn.14747

© 2020 Science X Network