DeepWMH: A deep learning tool for accurate white matter hyperintensity segmentation

White matter hyperintensities (WMHs) on fluid-attenuated inversion recovery (FLAIR) images are imaging features of various neurological diseases and essential markers of clinical impairment and disease progression. WMHs are associated with brain aging and with neurological conditions such as Alzheimer's disease, Parkinson's disease, cerebral small vessel disease, multiple sclerosis, neuromyelitis optica spectrum disorders, and neuronal intranuclear inclusion disease.

WMH lesions correlate with behavioral and cognitive performance and with risk factors. Efficient, reproducible quantitative analysis of these lesions can greatly benefit clinical practice and scientific research, and automated WMH segmentation makes such analysis possible. Accurate automated WMH segmentation algorithms are therefore highly desirable.

The earliest work on automated WMH segmentation used simple intensity thresholding. More advanced approaches analyze WMH morphology and use regression models or k-nearest neighbors for segmentation. More recently, convolutional neural networks have been applied to the task, and some work has attempted deep learning without any annotated training data.
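To make the simplest of these strategies concrete, here is a minimal sketch of intensity thresholding. It is an illustration only, not code from DeepWMH or from any of the earlier methods: the function name `threshold_wmh`, the flat list of voxel intensities, and the "mean plus k standard deviations" cutoff are all assumptions chosen for brevity.

```python
from statistics import mean, pstdev


def threshold_wmh(intensities, brain_mask, k=2.0):
    """Flag in-brain voxels brighter than mean + k * std of all in-brain voxels.

    `intensities` is a flat list of FLAIR-like values and `brain_mask` a
    parallel list of booleans. Both are hypothetical inputs for this sketch.
    """
    brain_values = [v for v, inside in zip(intensities, brain_mask) if inside]
    if not brain_values:
        return [False] * len(intensities)
    cutoff = mean(brain_values) + k * pstdev(brain_values)
    return [bool(inside) and v > cutoff
            for v, inside in zip(intensities, brain_mask)]


# Toy 1-D "scan": background voxels at the ends, one bright voxel inside the brain.
flair = [0.0, 100.0, 102.0, 98.0, 101.0, 99.0, 100.0, 180.0, 0.0]
mask = [False, True, True, True, True, True, True, True, False]
candidates = threshold_wmh(flair, mask, k=2.0)
```

A single global cutoff like this ignores anatomy and scanner differences, which is one reason such approaches tend to struggle on heterogeneous clinical data.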

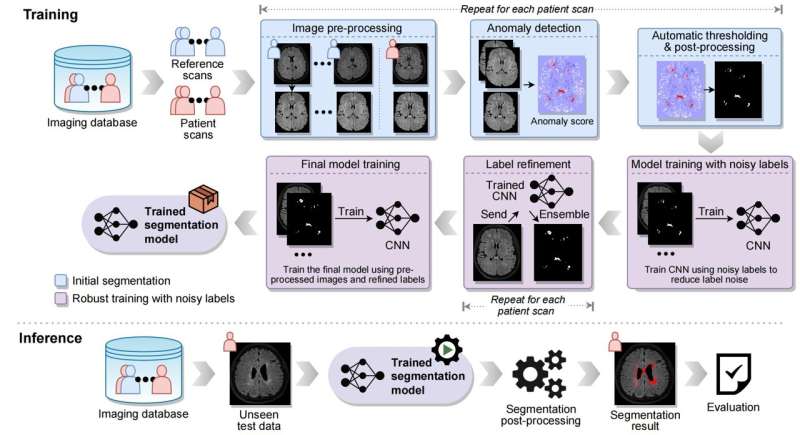

However, these methods are either not effective enough in clinical scenarios or require a large amount of annotated data. The researchers therefore developed DeepWMH, an open-source, annotation-free WMH lesion segmentation tool designed to remove the need for human annotation while achieving high segmentation accuracy.

DeepWMH was validated on 2,203 scans from nine datasets acquired with 18 scanning protocols and compared with state-of-the-art annotation-free WMH segmentation methods, which it outperformed by a large margin. It exploits the difference between normal-appearing and patient scans and combines that difference with deep learning, which allows accurate segmentation of WMHs without manual annotations for model training.

DeepWMH incorporates anatomical knowledge about normal brain tissue to indicate potential WMHs, then integrates that indication with deep learning using a training strategy that is robust to label noise. The tool is fully automatic and requires no hyperparameter tuning by users. Its performance is stable across datasets that differ in scanner model, type of brain disease, slice thickness, lesion location, and lesion size.
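One way to picture how normal-appearing scans could indicate potential WMHs is a voxel-wise comparison against a normal reference. The sketch below is a simplified illustration under strong assumptions, not DeepWMH's actual pipeline: it assumes all scans are already registered to a common space and intensity-normalized, and the function name `candidate_wmh_map`, the z-score rule, and the cutoff value are hypothetical.

```python
from statistics import mean, pstdev


def candidate_wmh_map(patient, normal_scans, z_cutoff=3.0, min_std=1e-6):
    """Mark voxels that are unusually bright relative to normal-appearing scans.

    `patient` is a flat list of voxel intensities; `normal_scans` is a list of
    such lists, aligned voxel by voxel (an assumption of this sketch).
    """
    flags = []
    for i, value in enumerate(patient):
        reference = [scan[i] for scan in normal_scans]
        mu = mean(reference)
        # Guard against division by zero where the normal scans agree exactly.
        sigma = max(pstdev(reference), min_std)
        flags.append((value - mu) / sigma > z_cutoff)
    return flags


# Three toy "normal" scans and one patient scan with a bright third voxel.
normal_scans = [
    [100.0, 110.0, 90.0, 105.0],
    [102.0, 108.0, 92.0, 103.0],
    [98.0, 112.0, 88.0, 107.0],
]
patient = [101.0, 111.0, 140.0, 104.0]
noisy_labels = candidate_wmh_map(patient, normal_scans)
```

A map produced this way would be imperfect, with both false positives and misses, which is why a training strategy that tolerates label noise matters when such indications stand in for manual annotations.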

DeepWMH is publicly available on GitHub. A pre-trained segmentation model is also provided so that it can be easily deployed.

DeepWMH can be easily distributed, installed, and applied directly in both clinical practice and scientific research. It may also inspire annotation-free segmentation of other types of brain lesions, where anatomical knowledge can be obtained from normal-appearing brain images and spatial normalization techniques.

The research is published in the journal Science Bulletin.

More information: Chenghao Liu et al, DeepWMH: A deep learning tool for accurate white matter hyperintensity segmentation without requiring manual annotations for training, Science Bulletin (2024). DOI: 10.1016/j.scib.2024.01.034

GitHub: github.com/lchdl/DeepWMH