June 12, 2013 report

Spike frequency adaption maintains efficiency in networks of neurons

(Medical Xpress) - Sensory adaptation is a familiar phenomenon. Whether you are jumping into a cold pool or driving through manure-laden air as you pass a recently fertilized farm, an initially strong sensory experience generally tends to fade over time. The same kind of adaptation we perceive at the conscious level also occurs at the level of the individual neuron, and of networks of neurons. In a paper just published in Nature Neuroscience, researchers from the EPFL in Switzerland seek to uncover some of the mechanisms of adaptation in cortical pyramidal cells. Based on their electrophysiological recordings, they developed a model that describes spike-frequency adaptation (SFA) in terms of a power-law decay. They attribute at least part of this adaptation to the effects of increased firing thresholds and lower membrane potentials. As many current network models either ignore SFA or include only its rapid effects, the authors call attention to the fact that this adaptation is significant on timescales of at least 20 seconds. They therefore conclude that SFA is a critical factor for maintaining the energetic efficiency of networks.
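To get a feel for why a power-law decay matters on a 20-second timescale, it can help to compare it with the exponential decay used for fast adaptation in many simple models. The sketch below is only an illustration: the kernel shapes, the function names, and every parameter value (`t0`, `exponent`, `tau`) are assumptions chosen for the example, not the fitted values from the paper.

```python
import math


def power_law_kernel(t, amplitude=1.0, t0=0.005, exponent=0.8):
    """Adaptation left over t seconds after a spike, decaying as a power law.

    amplitude, t0 and exponent are illustrative assumptions, not fitted values.
    """
    return amplitude * (1.0 + t / t0) ** (-exponent)


def exponential_kernel(t, amplitude=1.0, tau=0.1):
    """Adaptation left over t seconds after a spike, with a single time constant.

    tau is an illustrative assumption standing in for "rapid" adaptation.
    """
    return amplitude * math.exp(-t / tau)


if __name__ == "__main__":
    # Compare how much adaptation remains at increasing delays after a spike.
    for t in (0.01, 0.1, 1.0, 10.0, 20.0):
        print(
            f"t = {t:5.2f} s   power law = {power_law_kernel(t):.5f}   "
            f"exponential = {exponential_kernel(t):.3e}"
        )
```

With these assumed parameters the exponential kernel has effectively vanished within a second or two, while the power-law kernel still leaves a small but nonzero trace at 20 seconds. That long tail is the part of SFA that models with only rapid adaptation leave out.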

Each input synapse, of the many thousands found on a typical pyramidal cell, has tens to hundreds of individual ion channels. A single vesicle release event might therefore result in something like 70 million ions entering the synapse. In theory, this might require nearly as many ATP molecules to be consumed in the process of pumping the ions back out. Indeed, imaging studies have shown that the budget for the entire neuron approaches 5 billion ATP per second. In order to source this ATP currency, the cell dispatches mitochondrial shepherds, which also serve to round up and redistribute calcium that enters the cell during this activity.
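The two figures above can be put side by side with a little arithmetic. The sketch below takes the article's numbers at face value and assumes, as an upper bound, that one ATP is spent per ion pumped back out; the function names and the `ions_per_atp` parameter are hypothetical and exist only for this illustration.

```python
IONS_PER_RELEASE = 70e6        # ions entering per vesicle release (figure from the text)
ATP_BUDGET_PER_SECOND = 5e9    # whole-neuron ATP budget per second (figure from the text)


def atp_per_release(ions=IONS_PER_RELEASE, ions_per_atp=1.0):
    """ATP needed to pump the ions of one release event back out.

    ions_per_atp = 1.0 is an assumed worst case: one ATP per ion.
    """
    return ions / ions_per_atp


def releases_within_budget(budget=ATP_BUDGET_PER_SECOND, ions_per_atp=1.0):
    """Release events per second that would consume the entire ATP budget."""
    return budget / atp_per_release(ions_per_atp=ions_per_atp)


if __name__ == "__main__":
    print(f"ATP per release event: {atp_per_release():.2e}")
    print(f"Release events per second within budget: {releases_within_budget():.1f}")
```

Under that assumption, roughly 71 release events per second, summed over all of the cell's synapses, would already account for the whole budget, which gives some sense of why firing less can pay off.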

Clearly, any mechanism that lets a neuron do more with less would be of great value in this type of situation. SFA has been described as an energy-efficient coding method that can optimize information transfer at high signal-to-noise ratios. In real brains operating in real environments, that becomes an extremely murky statement. In an artificial model or in vitro experiment, the entire dendritic input is often approximated with a single sinusoidal current input. In this case, synaptic noise can simply be defined as an additional modulation of that single current.
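The simplified input described above is easy to write down. The following sketch treats the whole dendritic input as one sinusoidal current and adds synaptic noise as a Gaussian term on top; the Gaussian noise model, the arbitrary current units, and all names and default values are assumptions made for the example.

```python
import math
import random


def input_current(t, mean=0.5, amplitude=0.2, freq_hz=2.0, noise_sd=0.05, rng=random):
    """Input current (arbitrary units) at time t in seconds.

    One sinusoid stands in for the entire dendritic input; synaptic noise is
    modeled as an additive Gaussian term (an assumption for illustration).
    """
    signal = mean + amplitude * math.sin(2.0 * math.pi * freq_hz * t)
    noise = rng.gauss(0.0, noise_sd)
    return signal + noise


def signal_to_noise(amplitude=0.2, noise_sd=0.05):
    """Ratio of the sinusoid's variance (amplitude**2 / 2) to the noise variance."""
    return (amplitude ** 2 / 2.0) / (noise_sd ** 2)


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so repeated runs give the same trace
    dt = 0.001              # 1 ms time step
    trace = [input_current(i * dt, rng=rng) for i in range(1000)]
    print(f"samples: {len(trace)}, signal-to-noise ratio: {signal_to_noise():.1f}")
```

In a setup like this, "signal-to-noise ratio" has a crisp definition. With thousands of independently fluctuating synapses, it is much less obvious what counts as signal and what counts as noise.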

In reality, the relative rate of information transfer to the cell by any particular synapse would be negligible if every synapse were assumed to be potentially active at once. It is therefore likely that significant adaptation occurs not only at the level of the whole neuron, but also at the level of each synapse. This "significant adaptation" could mean that individual synapses, and even whole dendritic branches, could be effectively deactivated or otherwise shunted.

The authors suggest that the combined action of many adapting or inactivating ion channels could account for SFA. Generally speaking, these effects occur on a relatively fast timescale. The authors also note that, "a match of the relative strength of different currents implies a fine-tuned regulation of gene expression levels."

Fine-tuning indeed, but perhaps not necessary when you already have the mitochondria standing by to, in effect, warm up or cool off synapses. With a significant fraction of mitochondria being highly motile, the precise ratio of mitochondria to synapses has generally been tough to nail down. One way to interpret the activity and distribution of mitochondria is that these self-contained sacks of enzymes, rather than the labile protein components of synapses, essentially become the autonomous administrators of neuron firing modes and codes, and perhaps even of memory itself.

SFA would seem to present somewhat of a problem for temporal coding of information, particularly if it is desirable for neurons to maintain a relatively constant rate of information transfer. The authors maintain that SFA causes a temporal decorrelation, or whitening, of a neuron's output spike train. For some front-end systems, like the retina, removing correlations in stimulus features may be useful for achieving optimal encoding. While this is typically said to involve an adaptation to the statistics of the stimulus, a convincing theory of how this relates to adaptation in spike frequency has yet to emerge.

To take another example, directional localization in the auditory system involves the amplification of very small differences in the timing of spikes generated from each ear. In this case, neurons in brainstem nuclei appear to act as coincidence detectors, or more accurately, anticoincidence detectors, having a certain time window. This sort of "differential amplifier for time" would therefore toss out much of the information in the incoming signals, preserving only the effect of latency in the first spike or two. A concern with this kind of strategy is that sometimes you end up amplifying noise. Redundancy at other levels, as is often noted, would be one potential solution to this problem.
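The idea of a detector with a fixed time window can be sketched in a few lines. The example below covers only plain coincidence detection between two spike trains, not the anticoincidence or amplification behavior mentioned above; the function name, the microsecond units, and the sample spike times are all hypothetical.

```python
def coincidences(left_spikes, right_spikes, window):
    """Pair up spikes from the two ears that arrive within `window` of each other.

    Both inputs are sorted lists of spike times in the same units (integer
    microseconds in the example). Returns (left_time, right_time) pairs.
    """
    pairs = []
    start = 0
    for t_left in left_spikes:
        # Skip right-ear spikes that are too early to match this or any later left spike.
        while start < len(right_spikes) and right_spikes[start] < t_left - window:
            start += 1
        # Collect every right-ear spike that falls inside the window around t_left.
        k = start
        while k < len(right_spikes) and right_spikes[k] <= t_left + window:
            pairs.append((t_left, right_spikes[k]))
            k += 1
    return pairs


if __name__ == "__main__":
    left = [10_000, 20_000, 30_000]    # spike times in microseconds (made-up data)
    right = [10_200, 25_000, 30_100]
    for t_left, t_right in coincidences(left, right, window=300):
        print(f"left {t_left} us, right {t_right} us, difference {t_right - t_left} us")
```

Only the first and third spike pairs fall within the 300-microsecond window here, so the middle spikes are simply discarded. That discarding is the sense in which such a detector tosses out much of the incoming information and keeps only the timing differences.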

When auditory nerve neurons can no longer track the sound waveform, such as when the stimulus moves beyond a certain frequency, the code needs to adapt. If downstream neurons performing coincidence-based localization suddenly get sent a new code, they in turn need to adapt to this new code in some way. In moving toward slower spike rates over time as a general strategy, neurons may be forced to continually adopt new methods of stimulus encoding at each level.

The researchers hold that the information transfer rate is maintained even as neurons adapt to slower spike rates. Perhaps an example of this behavior can be given with a seaside analogy. When a seagull tries to land atop a telephone pole already occupied by another seagull, there is a near-instantaneous but information-rich interaction that determines which gull will remain atop the pole, and which will fly away.

The victorious gull invariably is compelled to emit a handful of punctate calls, which rapidly adapt to a slower frequency over the course of the next few seconds. In this case, those later-occurring spikes, or calls rather, actually seem to be the most interesting. They typically contain the most variability in terms of intensity, pitch, timing, and total number, and, perhaps inexplicably, are generally accompanied by an exaggerated head toss. As the "losing" gull flies away, those last one or two calls the victor sends to it, and to the larger community, while appearing to be the most fortuitously given, seem to have the highest investment.

Perhaps one way brains handle SFA at the network level is that, if there is any decrease in the rate of information transfer, they simply deal with it. We know, for example, that cold water is going to become less of a shock over time, and that other attentional mechanisms will be brought into play to ensure we don't forget it is still cold. While adaptation (and for that matter, its less-frequent counterpart, sensitization) in neurons cannot completely account for the parallel adaptive effect occurring at higher perceptual levels, it certainly should be counted as one of the main mechanisms.

More information: Temporal whitening by power-law adaptation in neocortical neurons, Nature Neuroscience (2013) doi:10.1038/nn.3431

Abstract

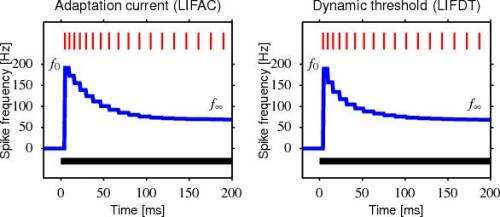

Spike-frequency adaptation (SFA) is widespread in the CNS, but its function remains unclear. In neocortical pyramidal neurons, adaptation manifests itself by an increase in the firing threshold and by adaptation currents triggered after each spike. Combining electrophysiological recordings in mice with modeling, we found that these adaptation processes lasted for more than 20 s and decayed over multiple timescales according to a power law. The power-law decay associated with adaptation mirrored and canceled the temporal correlations of input current received in vivo at the somata of layer 2/3 somatosensory pyramidal neurons. These findings suggest that, in the cortex, SFA causes temporal decorrelation of output spikes (temporal whitening), an energy-efficient coding procedure that, at high signal-to-noise ratio, improves the information transfer.

© 2013 Phys.org