Robots provide insight into human perception

Research using a robot designed to express human emotions has revealed unexpected insights into how our perception is affected by anthropomorphism, the attribution of human characteristics to animals or objects.

An international group of researchers, including a team from the Wellcome Trust Centre for Neuroimaging, University College London, conducted the study. They found that when volunteers focused on the emotions shown by the robot, their brain responses began to resemble their responses to humans showing the same emotions. The finding could inform the future development of personal robots by helping designers create robots that people find easier to interact with.

"My work looks for both a better understanding of the human perception system, and a better understanding of what features could make a robot more agreeable to interact with," says lead researcher Dr Thierry Chaminade from the Wellcome Trust Centre for Neuroimaging. "This is quite interesting, because the way you present your robot can make a huge difference in how people perceive it."

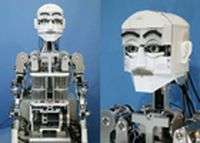

For the study, researchers from a number of countries, including the UK, Italy and Japan, used a robot called WE4-RII, which makes movements and facial expressions of human emotions. Thirteen male volunteers then watched short video clips of either a human or the robot expressing a particular emotion.

Each clip showed the face and upper body of the human or robot, moving from a neutral expression to joy, anger or disgust, or to speech. Participants had to rate on a sliding scale how much emotion or how much movement the face showed. As the volunteers rated the clips, their brains were scanned using functional magnetic resonance imaging (fMRI), a technique that noninvasively monitors activity in different parts of the brain.

As the researchers predicted, when the participants viewed the clips of humans, their brains showed more activity in areas that have so-called mirror properties. Mirror neurons are brain cells that send signals both when a person performs an action and when they view the same action being performed by another.

These neurons are thought to play a major role in empathy, so the researchers expected that the participants would show such activity when they viewed clips of a human moving and showing emotion.

The researchers also predicted that the participants would respond to the robot, but less strongly than to humans, because the robot is clearly mechanical. However, the participants showed a greater response to the robot when they were asked to rate its emotions than when they rated its movements.

The researchers think that when the participants judged the level of emotion shown by the robot, they paid less attention to the fact that it was mechanical, and so responded to it more strongly. "The robots provided extremely interesting tools to investigate the perception of actions," says Dr Chaminade.

"Perceiving actions is the first element of interacting with someone else - perceiving speech, perceiving what they're doing... It was completely unknown if robots being humanoids would be perceived as being human or not, which in itself opens an interesting question for using humanoids for an unbelievable number of applications."

More information: Chaminade T et al. Brain response to a humanoid robot in areas implicated in the perception of human emotional gestures. PLoS One. 2010 Jul 21;5(7):e11577.