Brain's 'thesaurus' mapped to help decode inner thoughts

What if a map of the brain could help us decode people's inner thoughts?

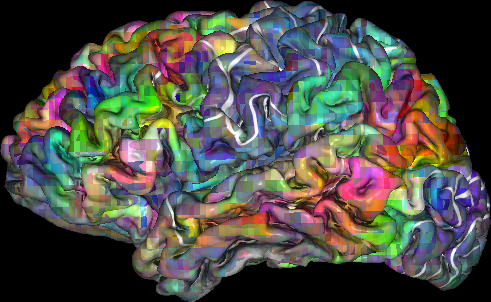

Scientists at the University of California, Berkeley, have taken a step in that direction by building a "semantic atlas" that shows in vivid colors and multiple dimensions how the human brain organizes language. The atlas identifies brain areas that respond to words that have similar meanings.

The findings, to be published April 28, 2016, in the journal Nature, are based on a brain imaging study that recorded neural activity while volunteers listened to stories from the "Moth Radio Hour." The results show that at least one-third of the brain's cerebral cortex, including areas dedicated to high-level cognition, is involved in language processing.

Notably, the study found that different people share similar language maps: "The similarity in semantic topography across different subjects is really surprising," said study lead author Alex Huth, a postdoctoral researcher in neuroscience at UC Berkeley.

Detailed maps showing how the brain organizes words by their meanings could eventually help give voice to people who cannot speak, such as those affected by stroke, brain damage, or motor neuron diseases like ALS.

While mind-reading technology remains far off on the horizon, charting how language is organized in the brain brings the decoding of inner dialogue a step closer to reality, the researchers said.

For example, clinicians could track the brain activity of patients who have difficulty communicating and then match that data to semantic language maps to determine what their patients are trying to express. Another potential application is a decoder that translates what you say into another language as you speak.

"To be able to map out semantic representations at this level of detail is a stunning accomplishment," said Kenneth Whang, a program director in the National Science Foundation's Information and Intelligent Systems division. "In addition, they are showing how data-driven computational methods can help us understand the brain at the level of richness and complexity that we associate with human cognitive processes."

Huth and six other native English speakers served as subjects for the experiment, which required volunteers to remain still inside a functional magnetic resonance imaging (fMRI) scanner for hours at a time.

Each participant's brain blood flow was measured as they listened, with eyes closed and headphones on, to more than two hours of stories from the "Moth Radio Hour," a public radio show in which people recount humorous or poignant autobiographical experiences.

Their brain imaging data were then matched against time-coded, phonemic transcriptions of the stories. Phonemes are units of sound that distinguish one word from another.
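To make the alignment step concrete, here is a minimal Python sketch that assigns each word in a time-coded transcript to the fMRI volume recorded while it was heard. The transcript, the 2-second scan interval (`TR`) and the `words_per_volume` helper are hypothetical, not the study's code, and the sketch ignores the several-second lag of the blood-flow response, which a real analysis would need to model.

```python
from collections import defaultdict

# Hypothetical time-coded transcript: (word, onset time in seconds).
transcript = [
    ("i", 0.4), ("grew", 0.7), ("up", 1.1), ("near", 1.6),
    ("the", 2.3), ("ocean", 2.5), ("with", 3.9), ("my", 4.2), ("sister", 4.4),
]

TR = 2.0  # assumed time between fMRI volumes, in seconds (illustrative only)


def words_per_volume(transcript, tr, n_volumes):
    """Group words by the fMRI volume (time bin) in which they were heard.

    Note: this ignores the delay of the blood-flow response (hypothetical helper).
    """
    bins = defaultdict(list)
    for word, onset in transcript:
        idx = int(onset // tr)  # index of the volume covering this onset time
        if idx < n_volumes:
            bins[idx].append(word)
    return [bins[i] for i in range(n_volumes)]


for i, words in enumerate(words_per_volume(transcript, TR, 3)):
    print(f"volume {i} ({i * TR:.0f}-{(i + 1) * TR:.0f} s): {words}")
```

Each volume then carries the set of words heard during it, which is what later analysis steps can relate to the measured brain activity.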

That information was then fed into a word-embedding algorithm that scored words according to how closely they are related semantically.
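The idea of scoring how closely words are related in meaning can be illustrated with cosine similarity between word vectors. The Python sketch below uses tiny hand-made vectors with hypothetical values and the hypothetical helpers `cosine_similarity` and `most_similar`; it is not the algorithm used in the study, whose word representations would have far more dimensions learned from large amounts of text.

```python
import math

# Toy, hand-made word vectors (hypothetical values for illustration only).
# Real word embeddings have hundreds of dimensions learned from large text corpora.
embeddings = {
    "mother": [0.9, 0.8, 0.1],
    "father": [0.85, 0.75, 0.15],
    "seven": [0.05, 0.1, 0.95],
}


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors: close to 1.0 for similar directions."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def most_similar(word):
    """Return the other word whose vector is most similar to `word`'s vector."""
    others = [w for w in embeddings if w != word]
    return max(others, key=lambda w: cosine_similarity(embeddings[word], embeddings[w]))


print(cosine_similarity(embeddings["mother"], embeddings["father"]))
print(cosine_similarity(embeddings["mother"], embeddings["seven"]))
print(most_similar("mother"))
```

Because "mother" and "father" point in nearly the same direction, they score much higher with each other than either does with "seven", which is the kind of grouping a thesaurus-like map reflects.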

The results were converted into a thesaurus-like map where the words are arranged on the flattened cortices of the left and right hemispheres of the brain rather than on the pages of a book. Words were grouped under various headings: visual, tactile, numeric, locational, abstract, temporal, professional, violent, communal, mental, emotional and social.

Not surprisingly, the maps show that many areas of the human brain represent language that describes people and social relations rather than abstract concepts.

"Our semantic models are good at predicting responses to language in several big swaths of cortex," Huth said. "But we also get the fine-grained information that tells us what kind of information is represented in each brain area. That's why these maps are so exciting and hold so much potential."
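Predicting a brain area's response from the meaning of the words being heard is often framed as a linear "encoding model." As an illustration only, the Python sketch below fits ridge regression to synthetic data for one simulated brain location (voxel) and scores it by the correlation between predicted and measured responses on held-out data. The data sizes, the regularization strength `alpha` and the `fit_ridge` helper are assumptions, not details taken from the study.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes: time points for training/testing and semantic features per time point.
n_train, n_test, n_features = 300, 100, 20

# Synthetic stimulus features and one simulated voxel that depends linearly on them plus noise.
true_weights = rng.normal(size=n_features)
X_train = rng.normal(size=(n_train, n_features))
X_test = rng.normal(size=(n_test, n_features))
y_train = X_train @ true_weights + rng.normal(scale=2.0, size=n_train)
y_test = X_test @ true_weights + rng.normal(scale=2.0, size=n_test)


def fit_ridge(X, y, alpha):
    """Closed-form ridge regression: w = (X^T X + alpha * I)^-1 X^T y."""
    n_feat = X.shape[1]
    return np.linalg.solve(X.T @ X + alpha * np.eye(n_feat), X.T @ y)


weights = fit_ridge(X_train, y_train, alpha=10.0)
predicted = X_test @ weights

# Prediction performance: correlation between predicted and measured held-out responses.
r = np.corrcoef(predicted, y_test)[0, 1]
print(f"held-out prediction correlation: {r:.2f}")
```

Evaluating on data the model never saw during fitting is what makes the correlation a fair measure of how well the semantic features "predict responses"; the fitted weights then indicate which kinds of meaning drive that location.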

"Although the maps are broadly consistent across individuals, there are also substantial individual differences," said study senior author Jack Gallant, a UC Berkeley neuroscientist. "We will need to conduct further studies across a larger, more diverse sample of people before we will be able to map these individual differences in detail."

More information: Alexander G. Huth et al., "Natural speech reveals the semantic maps that tile human cerebral cortex," Nature (2016). DOI: 10.1038/nature17637