May 22, 2024 report

Brain implant in conjunction with AI app allows nearly mute man to speak in two languages

A team of neurosurgeons and AI specialists at the University of California, San Francisco, has found some success in restoring speech to a patient who lost the ability after a stroke.

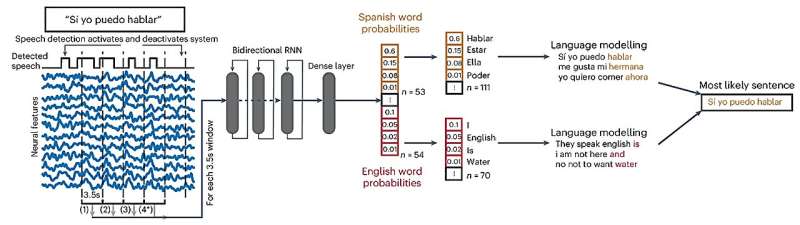

In their study, published in the journal Nature Biomedical Engineering, the group implanted a brain-computer interface (BCI) in a man nicknamed "Pancho" and applied AI techniques to the signals it recorded, helping him speak again, in two languages.

Previous trials have shown that a probe implanted on the surface of the brain can record brain activity, and that machine-learning techniques applied to those recordings can convert some of that activity into words. In this new effort, the research team has taken that work a step further by adding a second language.

The volunteer, Pancho, is a native Spanish speaker who lost most of his ability to speak after a stroke at age 20. Several years later, he learned English, including how to read English words and form them in his mind.

More recently, he took part in the research project, in which a lattice of electrodes was placed on the surface of a region of his brain involved in speech. A connector in his skull linked the BCI to a computer system.

Over the following three years, Pancho underwent training. He was shown words on a computer screen and asked to repeat them in his mind. As he did so, the implant recorded his brain activity and the system attempted to decode the word he was reading. The training included both Spanish and English words. A large language model (LLM) helped translate the decoded signals into words and reduced the number of errors.
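The article does not say exactly how the LLM was combined with the brain-signal decoder. One common approach, assumed here purely for illustration, is language-model rescoring: each candidate sentence receives a score from the neural decoder and a score from a language model, and the candidate with the best weighted sum wins. In the minimal Python sketch below, the function `rescore`, the weight `lm_weight`, and all probabilities are hypothetical and are not taken from the study.

```python
import math

def rescore(candidates, neural_logp, lm_logp, lm_weight=0.5):
    """Pick the candidate with the highest combined log-score.

    Hypothetical scoring rule (an assumption, not the study's method):
    score = neural decoder log-prob + lm_weight * language-model log-prob
    """
    def score(sentence):
        return neural_logp[sentence] + lm_weight * lm_logp[sentence]
    return max(candidates, key=score)

# Made-up example: the neural decoder alone slightly prefers the wrong sentence...
candidates = ["I am thirsty", "I am thirty"]
neural_logp = {"I am thirsty": math.log(0.45), "I am thirty": math.log(0.55)}
# ...but a language model rates the intended sentence as far more plausible.
lm_logp = {"I am thirsty": math.log(0.02), "I am thirty": math.log(0.001)}

print(max(candidates, key=neural_logp.get))       # I am thirty
print(rescore(candidates, neural_logp, lm_logp))  # I am thirsty
```

With the language-model term switched off (`lm_weight=0`), the neural score alone picks the wrong sentence; adding the language model corrects it, which illustrates the kind of error reduction the article attributes to the LLM.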

The system identified whether Pancho was speaking Spanish or English with 88% accuracy and decoded words with 75% accuracy overall. The researchers note that this was enough to allow him to hold conversations with the research team.
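The article likewise does not describe how the system decided which language Pancho was using. One simple scheme, assumed here for illustration only, is to decode each attempt with both an English and a Spanish model and pick the language whose best candidate scores higher; language accuracy is then the fraction of attempts where that pick matches the language actually used. The function names `pick_language` and `language_accuracy` and the toy scores below are hypothetical and are not the study's data.

```python
def pick_language(scores_by_language):
    # Best (highest) log-score that each language's decoder achieved for this attempt.
    best = {lang: max(scores) for lang, scores in scores_by_language.items()}
    # Choose the language whose best candidate scored highest.
    return max(best, key=best.get)

def language_accuracy(trials):
    # Fraction of attempts where the predicted language matches the true one.
    correct = sum(pick_language(scores) == truth for scores, truth in trials)
    return correct / len(trials)

# Toy, made-up trials: (candidate log-scores per language, language actually used)
trials = [
    ({"English": [-3.1, -4.0], "Spanish": [-5.2, -6.3]}, "English"),
    ({"English": [-6.0, -7.5], "Spanish": [-2.9, -3.5]}, "Spanish"),
    ({"English": [-4.4, -4.9], "Spanish": [-4.1, -5.0]}, "English"),  # misclassified
    ({"English": [-2.0], "Spanish": [-3.0]}, "English"),
    ({"English": [-5.5, -5.8], "Spanish": [-1.7]}, "Spanish"),
]

print(language_accuracy(trials))  # 0.8
```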

More information: Alexander B. Silva et al., A bilingual speech neuroprosthesis driven by cortical articulatory representations shared between languages, Nature Biomedical Engineering (2024). DOI: 10.1038/s41551-024-01207-5

© 2024 Science X Network