Artificial brains learn to adapt

For every thought or behavior, the brain erupts in a riot of activity, as thousands of cells communicate via electrical and chemical signals. Each nerve cell influences others within an intricate, interconnected neural network. And connections between brain cells change over time in response to our environment.

Despite supercomputer advances, the human brain remains the most flexible, efficient information processing device in the world. Its exceptional performance inspires researchers to study and imitate it as an ideal of computing power.

Artificial neural networks

Computer models built to replicate how the brain processes, memorizes or retrieves information are called artificial neural networks. For decades, engineers and computer scientists have used artificial neural networks as an effective tool for real-world problems such as classification, estimation and control.

However, conventional artificial neural networks do not account for some basic characteristics of the human brain, such as signal transmission delays between neurons, membrane potentials and synaptic currents.

A new generation of neural network models, called spiking neural networks, is designed to better model the dynamics of the brain, where neurons initiate signals to other neurons in their networks with a rapid spike in cell voltage. By modeling biological neurons, spiking neural networks may be able to mimic brain activity in simulations, enabling researchers to investigate neural networks in a biological context.
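To make the idea of a "spike in cell voltage" concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, a common textbook simplification of a spiking neuron. It is not the model used by the Duke team, which the article does not specify. The function name `simulate_lif` and every parameter value (resting voltage, threshold, time constant, resistance) are illustrative assumptions.

```python
def simulate_lif(input_current, dt=1.0, tau=20.0, v_rest=-65.0,
                 v_threshold=-50.0, v_reset=-65.0, resistance=10.0):
    """Simulate one leaky integrate-and-fire neuron.

    input_current: sequence of input values, one per time step.
    Returns the list of times (in the same units as dt) at which
    the neuron spiked. All default values are illustrative only.
    """
    v = v_rest  # membrane potential starts at rest
    spike_times = []
    for step, current in enumerate(input_current):
        # Euler step: the voltage leaks back toward rest while the
        # input current pushes it upward.
        dv = (-(v - v_rest) + resistance * current) * dt / tau
        v += dv
        if v >= v_threshold:
            # Threshold crossed: record a spike and reset the voltage.
            spike_times.append(step * dt)
            v = v_reset
    return spike_times


if __name__ == "__main__":
    weak = simulate_lif([1.0] * 200)    # too weak to reach threshold
    strong = simulate_lif([2.0] * 200)  # strong enough to fire repeatedly
    print(len(weak), len(strong))
```

In this sketch a weak input never drives the voltage to threshold, so the neuron stays silent, while a stronger input produces a regular train of spikes. Information is carried by when and how often the neuron fires rather than by a single continuous output value.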

With funding from the National Science Foundation, Silvia Ferrari of the Laboratory for Intelligent Systems and Controls at Duke University uses a new variation of spiking neural networks to better replicate the behavioral learning processes of mammalian brains.

Behavioral learning involves the use of sensory feedback, such as vision, touch and sound, to improve motor performance and enable people to respond and quickly adapt to their changing environment.

"Although existing engineering systems are very effective at controlling dynamics, they are not yet capable of handling unpredicted damages and failures handled by biological brains," Ferrari said.

How to teach an artificial brain

Ferrari's team is applying the spiking neural network model, which learns on the fly, to complex, critical engineering systems such as aircraft and power plants, with the goal of making them safer, more cost-efficient and easier to operate.

The team has constructed an algorithm that teaches spiking neural networks which information is relevant and how important each factor is to the overall goal. Using computer simulations, they've demonstrated the algorithm on aircraft flight control and robot navigation.

They started, however, with an insect.

"Our method has been tested by training a virtual insect to navigate in an unknown terrain and find foods," said Xu Zhang, a Ph.D. candidate who works on training the spiking neural network. "The nervous system was modeled by a large spiking neural network with unknown and random synaptic connections among those neurons."

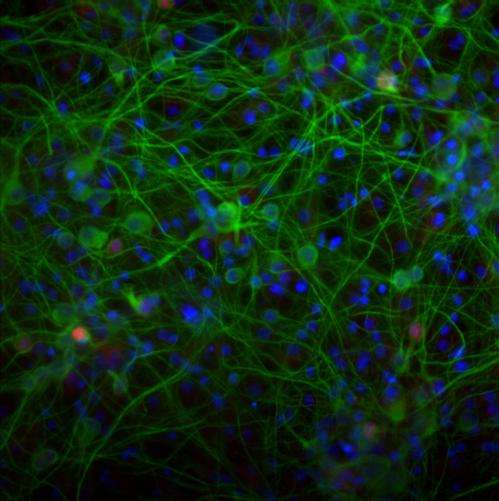

Having tested their algorithm in computer simulations, the researchers are now testing it biologically.

To do so, they will use lab-grown brain cells genetically altered to respond to certain types of light. This technique, called optogenetics, allows researchers to control how nerve cells communicate. When the light pattern changes, the neural activity changes.

The researchers hope to observe that the living neural network adapts over time to the light patterns and therefore has the ability to store and retrieve sensory information, just as human neuronal networks do.

Large-scale applications of small-scale findings

Uncovering the fundamental mechanisms responsible for the brain's learning processes can potentially yield insights into how humans learn—and make an everyday difference in people's lives.

Such insights may advance the development of artificial devices that can substitute for certain motor, sensory or cognitive abilities, particularly prosthetics that respond to feedback from the user and the environment. People with Parkinson's disease and epilepsy have already benefited from these types of devices.

"One of the most significant challenges in reverse-engineering the brain is to close the knowledge gap that exists between our understanding of biophysical models of neuron-level activity and the synaptic plasticity mechanisms that drive meaningful learning," said Greg Foderaro, a postdoctoral fellow involved in the research.

"We believe that by considering the networks at several levels—from computation to cell cultures to brains—we can greatly expand our understanding of the system of sensory and motor functions, as well as making a large step towards understanding the brain as a whole."