Sounds and words are processed separately and simultaneously in the brain

After years of research, neuroscientists have discovered a new pathway in the human brain that processes the sounds of language. The findings, reported August 18 in the journal Cell, suggest that auditory and speech processing occur in parallel, contradicting a long-held theory that the brain first processes acoustic information and then transforms it into linguistic information.

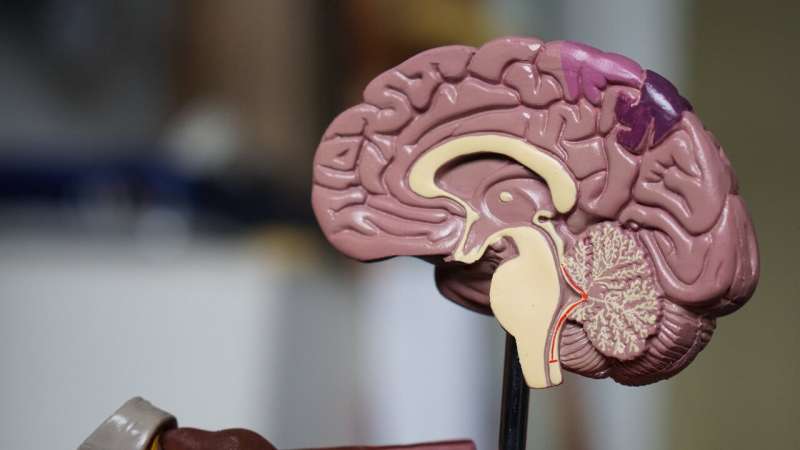

Sounds of language, upon reaching the ears, are converted into electrical signals by the cochlea and sent to a brain region called the auditory cortex on the temporal lobe. For decades, scientists have thought that speech processing in the auditory cortex followed a serial pathway, similar to an assembly line in a factory. It was thought that first, the primary auditory cortex processes the simple acoustic information, such as frequencies of sounds. Then, an adjacent region, called the superior temporal gyrus (STG), extracts features more important to speech, like consonants and vowels, transforming sounds into meaningful words.

But direct evidence for this theory has been lacking as it requires very detailed neurophysiological recordings from the entire auditory cortex with extremely high spatiotemporal resolution. This is challenging, because the primary auditory cortex is located deep in the cleft that separates the frontal and temporal lobes of the human brain.

"So, we went into this study, hoping to find evidence for that—the transformation of the low-level representation of sounds into the high-level representation of words," says neuroscientist and neurosurgeon Edward Chang at the University of California, San Francisco.

Over the course of seven years, Chang and his team studied nine participants who had to undergo brain surgery for medical reasons, such as to remove a tumor or locate a seizure focus. For these procedures, arrays of small electrodes were placed over each participant's entire auditory cortex to collect neural signals for language and seizure mapping. The participants also volunteered to have the recordings analyzed to understand how the auditory cortex processes speech sounds.

"This study is the first time that we could cover all of these areas simultaneously directly from the brain surface and study the transformation of sounds to words," Chang says. Previous attempts to study the region's activities largely involved inserting a wire into the area, which could only reveal the signals at a limited number of spots.

When they played short phrases and sentences for the participants, the researchers expected to find a flow of information from the primary auditory cortex to the adjacent STG, as the traditional model proposes. If that were the case, the two areas would be activated one after the other.

Surprisingly, the team found that some areas located in the STG responded as fast as the primary auditory cortex when sentences were played, suggesting that both areas started processing acoustic information at the same time.

Additionally, as part of clinical language mapping, researchers stimulated the participants' primary auditory cortex with small electric currents. If speech processing followed a serial pathway, as the traditional model suggests, the stimulation would likely distort the patients' perception of speech. On the contrary, while participants experienced auditory noise hallucinations induced by the stimulation, they were still able to clearly hear and repeat words said to them. However, when the STG was stimulated, the participants reported that they could hear people speaking, "but can't make out the words."

"In fact, one of the patients said that it sounded like the syllables were being swapped in the words," Chang says.

The latest evidence suggests the traditional hierarchy model of speech processing is over-simplified and likely incorrect. The researchers speculate that the STG may function independently from—instead of as a next step of—processing in the primary auditory cortex.

The parallel nature of speech processing may give doctors new ideas for how to treat conditions such as dyslexia, where children have trouble identifying speech sounds.

"While this is important step forward, we don't understand this parallel auditory system very well yet. The findings suggest that the routing of sound information could be very different than we ever imagined. It certainly raises more questions than it answers," Chang says.

More information: Cell, Hamilton et al.: "Parallel and distributed encoding of speech across human auditory cortex" www.cell.com/cell/fulltext/S0092-8674(21)00878-3, DOI: 10.1016/j.cell.2021.07.019