Neuroscientists discover a brain signal that indicates whether speech has been understood

Neuroscientists from Trinity College Dublin and the University of Rochester have identified a specific brain signal associated with the conversion of speech into understanding. The signal is present when the listener has understood what they have heard, but it is absent when they either did not understand, or weren't paying attention.

The uniqueness of the signal means that it could have a number of potential applications, such as tracking language development in infants, assessing brain function in unresponsive patients, or determining the early onset of dementia in older persons.

During our everyday interactions, we routinely speak at rates of 120 to 200 words per minute. For listeners to understand speech at these rates - and to not lose track of the conversation - their brains must comprehend the meaning of each of these words very rapidly. It is an amazing feat of the human brain that we do this so easily, especially given that the meaning of words can vary greatly depending on the context. For example, the word "bat" means very different things in the following two sentences: "I saw a bat flying overhead last night"; "The baseball player hit a home run with his favourite bat."

However, precisely how our brains compute the meaning of words in context has, until now, remained unclear. The new approach, published today in the international journal Current Biology, shows that our brains perform a rapid computation of the similarity in meaning that each word has to the words that have come immediately before it.

To discover this, the researchers began by exploiting state-of-the-art techniques that allow modern computers and smartphones to "understand" speech. These techniques are quite different to how humans operate. Human evolution has been such that babies come more or less hardwired to learn how to speak based on a relatively small number of speech examples. Computers on the other hand need a tremendous amount of training, but because they are fast, they can accomplish this training very quickly. Thus, one can train a computer by giving it a lot of examples (e.g., all of Wikipedia) and by asking it to recognise which pairs of words appear together a lot and which don't. By doing this, the computer begins to "understand" that words that appear together regularly, like "cake" and "pie", must mean something similar. And, in fact, the computer ends up with a set of numerical measures capturing how similar any word is to any other.
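The idea of learning word similarity from co-occurrence can be sketched in a few lines of Python. This is a minimal illustration only, not the technique used in the study: it assumes a tiny made-up corpus, a hypothetical context window of two words, and plain co-occurrence counts compared with cosine similarity, whereas real systems are trained on far larger text collections with more sophisticated models.

```python
import math
from collections import Counter, defaultdict


def cooccurrence_vectors(sentences, window=2):
    """For every word, count which other words appear within `window` positions of it."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            lo = max(0, i - window)
            hi = min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    vectors[word][tokens[j]] += 1
    return vectors


def cosine_similarity(a, b):
    """Cosine of the angle between two sparse count vectors (0.0 if either is empty)."""
    dot = sum(count * b[key] for key, count in a.items() if key in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Toy corpus, invented for illustration (a stand-in for "all of Wikipedia").
corpus = [
    "she baked a cake for dessert",
    "she baked a pie for dessert",
    "he ate the cake after dinner",
    "he ate the pie after dinner",
    "the bat flew out of the cave",
]

vectors = cooccurrence_vectors(corpus)
sim_cake_pie = cosine_similarity(vectors["cake"], vectors["pie"])
sim_cake_cave = cosine_similarity(vectors["cake"], vectors["cave"])
print(f"cake vs pie:  {sim_cake_pie:.2f}")
print(f"cake vs cave: {sim_cake_cave:.2f}")
```

In this toy corpus "cake" and "pie" are surrounded by the same words, so their similarity score comes out much higher than that of "cake" and "cave". The result is the kind of numerical similarity measure the article describes, at a vastly smaller scale.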

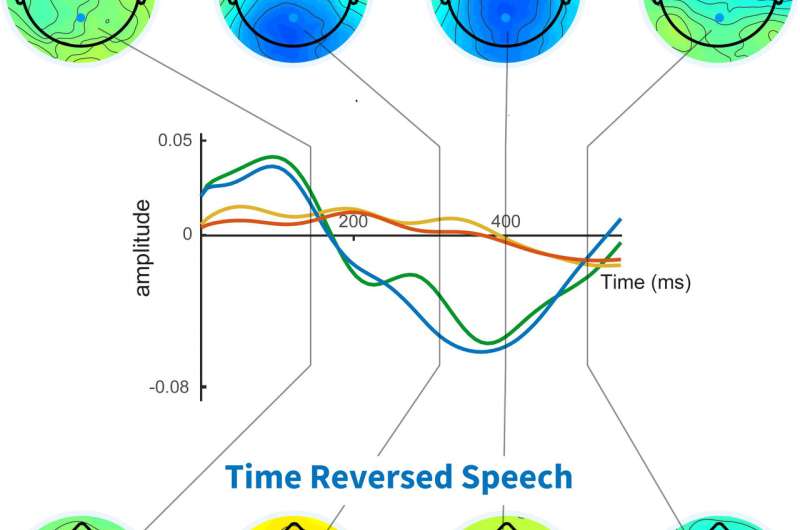

To test if human brains actually compute the similarity between words as we listen to speech, the researchers recorded electrical brainwave signals from the human scalp - a technique known as electroencephalography or EEG - as participants listened to a number of audiobooks. Then, by analysing their brain activity, they identified a specific brain response that reflected how similar or different a given word was from the words that preceded it in the story.
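One way a "how different is this word from what came before" score could be computed is sketched below. The article does not specify the formula, so this example makes several assumptions: each word is represented by a small hand-written vector (`toy_vectors`, hypothetical values), the context is the average of all preceding word vectors, and dissimilarity is taken as one minus the Pearson correlation between the two.

```python
import math


def mean_vector(vectors):
    """Element-wise average of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def pearson(x, y):
    """Pearson correlation between two equal-length vectors (0.0 if either is constant)."""
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if spread_x == 0 or spread_y == 0:
        return 0.0
    return cov / (spread_x * spread_y)


def dissimilarity_to_context(word_vectors):
    """Score each word after the first by 1 - correlation with the mean of the preceding words."""
    scores = []
    for i in range(1, len(word_vectors)):
        context = mean_vector(word_vectors[:i])
        scores.append(1.0 - pearson(word_vectors[i], context))
    return scores


# Hypothetical 4-dimensional word vectors, invented for illustration.
toy_vectors = {
    "cake": [0.9, 0.8, 0.1, 0.0],
    "pie": [0.8, 0.9, 0.0, 0.1],
    "bat": [0.0, 0.1, 0.9, 0.8],
}

story = ["cake", "pie", "bat"]
scores = dissimilarity_to_context([toy_vectors[word] for word in story])
for word, score in zip(story[1:], scores):
    print(f"{word}: {score:.2f}")
```

Here "pie" scores close to 0 because it resembles the preceding "cake", while "bat" scores close to 2 because it differs sharply from both. A per-word sequence like this is the sort of quantity that could then be compared against the recorded EEG signal.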

Crucially, this signal disappeared completely when the subjects either could not understand the speech (because it was too noisy), or when they were just not paying attention to it. Thus, this signal represents an extremely sensitive measure of whether or not a person is truly understanding the speech they are hearing, and, as such, it has a number of potentially important applications.

Ussher Assistant Professor in Trinity College Dublin's School of Engineering, Trinity College Institute of Neuroscience, and Trinity Centre for Bioengineering, Ed Lalor, led the research.

Professor Lalor said: "Potential applications include testing language development in infants, or determining the level of brain function in patients in a reduced state of consciousness. The presence or absence of the signal may also confirm if a person in a job that demands precision and speedy reactions - such as an air traffic controller, or soldier - has understood the instructions they have received, and it may perhaps even be useful for testing for the onset of dementia in older people based on their ability to follow a conversation."

"There is more work to be done before we fully understand the full range of computations that our brains perform when we understand speech. However, we have already begun searching for other ways that our brains might compute meaning, and how those computations differ from those performed by computers. We hope the new approach will make a real difference when applied in some of the ways we envision."

More information: Current Biology (2018). DOI: 10.1016/j.cub.2018.01.080, www.cell.com/current-biology/f … 0960-9822(18)30146-5